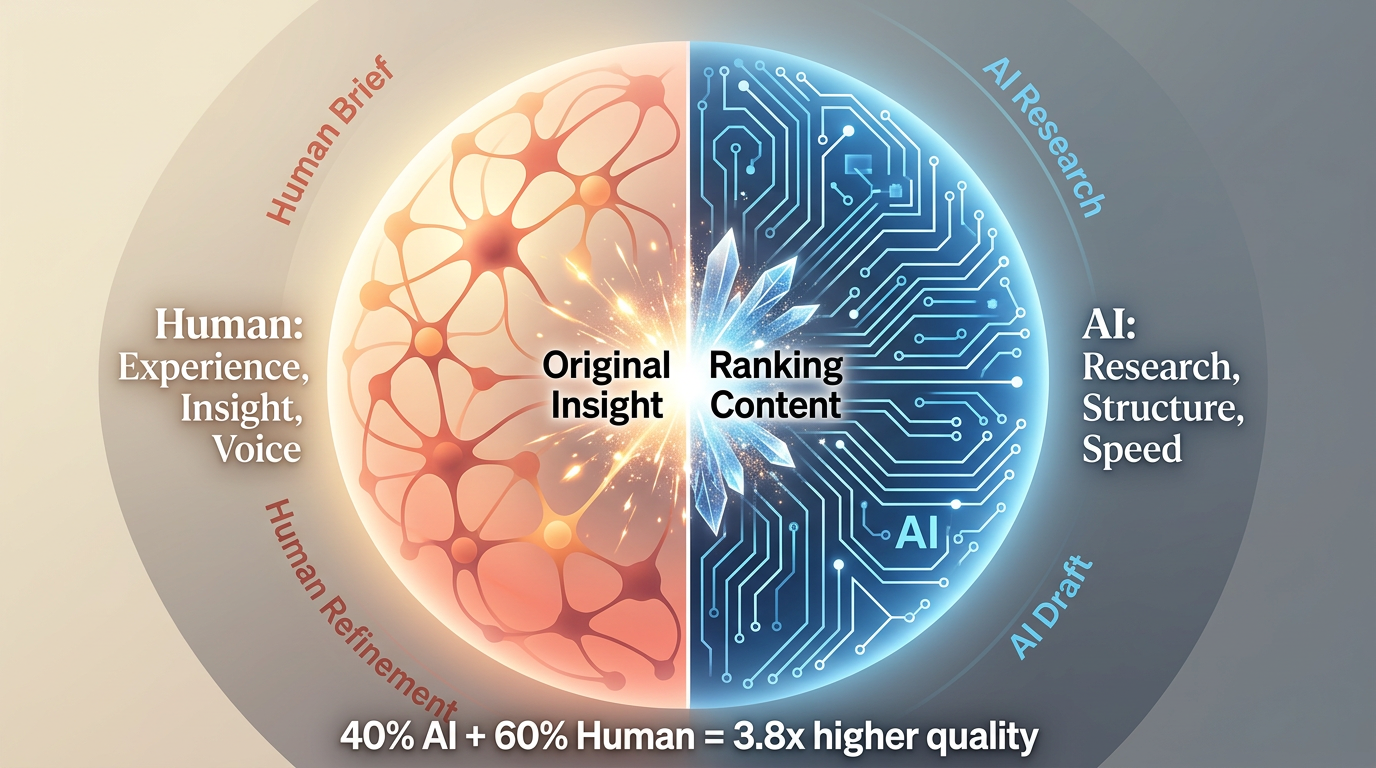

Human-AI collaboration workflows are processes that combine AI automation with human expertise to produce high-quality content efficiently. In 2026, the most successful content teams do not replace humans with AI. Instead, they build workflows where AI handles research and structure while humans add insights, experience, and voice.

Recent data from the Content Marketing Institute (2026) shows that teams with structured human-AI workflows produce 2.5x more content at 3.8x higher quality than teams using AI or humans alone.

The Human-AI Sweet Spot

What AI Does Well

AI excels at:

- Research: Analyzing SERPs, extracting patterns, identifying gaps (about 6x faster than manual research, based on the timings below)

- Structure: Creating outlines, organizing information, formatting for readability

- Drafting: Generating foundational content, expanding on outlined points

- Data Processing: Summarizing information, extracting statistics, organizing data

- Scalability: Producing consistent output at scale

Time Savings: AI-assisted research takes 20-30 minutes, versus 2-3 hours done manually

What Humans Do Well

Humans excel at:

- Original Insights: Unique perspectives, creative angles, contrarian views

- Personal Experience: "I tested this for 6 months and here's what happened"

- Real Examples: Specific use cases, case studies, customer stories

- Nuance: Understanding context, complexity, edge cases

- Voice and Personality: Making content engaging, relatable, memorable

Quality Impact: Human refinement increases AI citation rate by 47%

The Collaboration Formula

Successful Formula:

- 40-60% AI (research, structure, foundation)

- 40-60% Human (insights, experience, voice)

Why This Balance Works:

- AI provides speed and scale

- Humans provide quality and uniqueness

- Combined: High-quality content at scale

Evidence: Teams using 40-60% AI content see 3.8x higher rankings than teams publishing 100% human content

Building Effective Collaboration Workflows

Phase 1: AI-First Research

AI Role: Research SERPs, analyze competitors, identify gaps

Human Role: Define research parameters, review findings, set priorities

Process:

- Human defines target topic and keywords

- AI conducts SERP analysis using RankDraft (10-15 minutes)

- AI extracts patterns from top 10 results

- AI identifies content gaps

- Human reviews AI findings and validates insights (5-10 minutes)

- Human sets content priorities and unique angle

Time Investment: 15-25 minutes (vs. 2-3 hours manual)

Output: Research summary and gap analysis
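
The pattern-extraction and gap-identification steps above can be sketched as simple counting. This is a minimal illustration, not RankDraft's actual method. It assumes each top-ranking page has already been reduced to a set of subtopic labels, and the names `find_patterns_and_gaps`, `pattern_share`, and the sample data are all hypothetical.

```python
from collections import Counter


def find_patterns_and_gaps(pages, candidate_topics, pattern_share=0.6):
    """Split candidate subtopics into common patterns and content gaps.

    pages: list of sets, one set of subtopic labels per ranking page (assumed input).
    candidate_topics: subtopics the human strategist considers relevant.
    pattern_share: assumed threshold; a subtopic covered by at least this
        share of pages counts as a pattern, one covered by no page is a gap.
    """
    if not pages:
        raise ValueError("need at least one page to analyze")
    counts = Counter(topic for page in pages for topic in page)
    total = len(pages)
    patterns = sorted(t for t in candidate_topics if counts[t] / total >= pattern_share)
    gaps = sorted(t for t in candidate_topics if counts[t] == 0)
    return patterns, gaps


# Hypothetical subtopics extracted from three ranking pages.
pages = [
    {"pricing", "setup", "integrations"},
    {"pricing", "setup"},
    {"pricing", "case studies"},
]
candidates = {"pricing", "setup", "case studies", "migration"}

patterns, gaps = find_patterns_and_gaps(pages, candidates)
print(patterns)  # ['pricing', 'setup']
print(gaps)      # ['migration']
```

The human review step still decides which gaps are worth filling; the counts only narrow the list.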

Phase 2: Collaborative Briefing

AI Role: Generate initial brief structure based on research

Human Role: Add unique angle, quality injection points, specific requirements

Process:

- AI generates initial brief from research findings (5-10 minutes)

- Human reviews brief and adds unique angle (10-15 minutes)

- Human adds quality injection points (specific data, examples, insights)

- Human adds platform-specific requirements (Google, Perplexity, ChatGPT, Claude)

- Human sets word count and quality standards

Time Investment: 20-30 minutes (vs. 1-2 hours manual)

Output: Comprehensive content brief (2 pages)

Phase 3: AI-First Drafting

AI Role: Generate first draft based on research and brief

Human Role: Provide prompt guidance, review structure

Process:

- Human writes a detailed prompt that includes the research and brief

- AI generates first draft (30-45 minutes)

- Human reviews structure and flow (10-15 minutes)

- Human requests revisions if structure needs adjustment

Time Investment: 45-60 minutes (vs. 4-6 hours manual writing)

Output: First draft (2,000-3,000 words)

Phase 4: Human Refinement (Critical Phase)

AI Role: None (this is human territory)

Human Role: Add insights, experience, voice, and personality

Process:

- Human reads draft and identifies sections needing human touch

- Human adds personal experience and anecdotes (20-30 minutes)

- Human adds original insights and perspectives (20-30 minutes)

- Human adds real examples and case studies (20-30 minutes)

- Human refines tone and voice throughout (20-30 minutes)

Time Investment: 1.5-2 hours

Output: Refined draft with human elements

Quality Impact: This phase increases AI citation rate by 47%

Phase 5: Collaborative Quality Control

AI Role: Check for grammar, spelling, readability scores

Human Role: Fact-check, verify accuracy, ensure quality gates met

Process:

- AI grammar and spelling check (5 minutes)

- Human fact-checks statistics and claims (15-20 minutes)

- Human verifies internal links work (5 minutes)

- Human checks quality gates (10-15 minutes)

- Human approves or requests final revisions

Time Investment: 40-50 minutes

Output: Ready-to-publish content
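
To see what the five phases add up to, the sketch below sums the time ranges stated for each phase. The ranges come straight from the phase descriptions above; the names `PHASES` and `total_minutes` are illustrative only.

```python
# Per-phase time investment in minutes (low, high), as stated in the five phases above.
PHASES = {
    "AI-first research": (15, 25),
    "Collaborative briefing": (20, 30),
    "AI-first drafting": (45, 60),
    "Human refinement": (90, 120),
    "Collaborative quality control": (40, 50),
}


def total_minutes(phases):
    """Sum the low ends and the high ends of every phase's time range."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values())
    return low, high


low, high = total_minutes(PHASES)
print(f"{low}-{high} minutes ({low / 60:.2f}-{high / 60:.2f} hours)")
# 210-285 minutes (3.50-4.75 hours)
```

Taking each phase's stated range at face value, one piece takes roughly 3.5 to 4.75 hours from research to approval.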

Workflow Templates

Template 1: Solo Creator (1 Person)

Process:

- Define topic (Human): 5 minutes

- AI SERP research (RankDraft): 15 minutes

- Review research and set angle (Human): 10 minutes

- AI brief generation: 10 minutes

- Refine brief with requirements (Human): 15 minutes

- AI first draft: 30 minutes

- Human refinement: 1.5 hours

- AI grammar check: 5 minutes

- Human quality control: 30 minutes

Total Time: 3.5 hours per piece (210 minutes)

Output: 8-10 pieces/month

Quality: High

Template 2: Small Team (2-5 People)

Roles:

- Strategist: Topic definition and brief refinement

- Researcher: AI research oversight and brief creation

- Writer: AI first draft and human refinement

- Editor: Quality control

Process:

- Strategist defines topic: 5 minutes

- Researcher conducts AI research (RankDraft): 15 minutes

- Researcher creates AI brief: 15 minutes

- Strategist refines brief: 10 minutes

- Writer generates AI first draft: 30 minutes

- Writer adds human refinement: 1.5 hours

- Editor quality control: 45 minutes

Total Time per Piece: 3.5 hours (210 minutes)

Total Pieces per Team: 12-20 pieces/month

Quality: High

Template 3: Medium Team (6-15 People)

Roles:

- Content Strategist: Topic definition and strategy

- Content Researchers: AI research and brief creation

- AI-Assisted Writers: First drafts and refinement

- Content Editors: Quality control

- SEO/GEO Specialist: Platform optimization

Process:

- Strategist defines topic: 5 minutes

- Researcher conducts AI research: 15 minutes

- Researcher creates AI brief: 15 minutes

- Writer generates AI first draft: 30 minutes

- Writer adds human refinement: 1.5 hours

- SEO/GEO optimizes: 30 minutes

- Editor quality control: 45 minutes

Total Time per Piece: just under 4 hours (230 minutes)

Total Pieces per Team: 30-60 pieces/month

Quality: High

Common Workflow Mistakes

1. Skipping Human Refinement

Mistake: Publishing AI-generated content without human refinement.

Impact:

- Forgoes the 47% AI citation lift that human refinement provides

- 61% lower Page 1 rankings

- Generic, low-quality content

Fix: Mandate human refinement. This phase is non-negotiable.

2. Poor Prompting

Mistake: Using generic prompts like "write a blog post about X".

Impact:

- Generic, repetitive content

- Misses research findings

- Doesn't meet quality standards

Fix: Use detailed prompts with research context, brief, and specific requirements.
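
One way to make detailed prompts the default is to assemble them from the outputs of the earlier phases. The function below is a minimal sketch under that assumption; `build_draft_prompt`, its parameters, and the sample values are hypothetical, not part of any particular tool.

```python
def build_draft_prompt(topic, research_summary, brief, requirements):
    """Assemble one detailed drafting prompt from the workflow's earlier outputs."""
    requirement_lines = "\n".join(f"- {item}" for item in requirements)
    return (
        f"Write a first draft about: {topic}\n\n"
        f"Research findings to cover:\n{research_summary}\n\n"
        f"Content brief:\n{brief}\n\n"
        f"Specific requirements:\n{requirement_lines}\n"
    )


# Hypothetical inputs standing in for a real research summary and brief.
prompt = build_draft_prompt(
    topic="Human-AI collaboration workflows",
    research_summary="Top results skip quality gates and team templates.",
    brief="Unique angle: a five-phase workflow with time estimates.",
    requirements=["2,000-3,000 words", "Include one case study"],
)
print(prompt)
```

Because the prompt is built from named inputs, a missing research summary or brief shows up as an empty section instead of silently producing a generic draft.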

3. No Quality Gates

Mistake: No defined standards or checklist for content approval.

Impact:

- Inconsistent quality

- Missed requirements

- Low rankings and citations

Fix: Document quality gates. Use checklists. Train team on standards.
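
A documented quality gate can be as simple as a fixed checklist that a piece must clear before approval. The sketch below assumes four gates drawn from the quality-control steps described in this article; the gate names and the `review` function are illustrative, and a real team would substitute its own list.

```python
# Hypothetical gate names drawn from the quality-control steps in this article.
QUALITY_GATES = [
    "grammar and spelling checked",
    "statistics and claims fact-checked",
    "internal links verified",
    "human refinement completed",
]


def review(results):
    """Return (approved, failed_gates) for a dict of gate name -> bool.

    A gate missing from `results` counts as failed, so nothing is
    approved by omission.
    """
    failed = [gate for gate in QUALITY_GATES if not results.get(gate, False)]
    return (not failed, failed)


approved, failed = review({
    "grammar and spelling checked": True,
    "statistics and claims fact-checked": True,
    "internal links verified": False,
})
print(approved)  # False
print(failed)    # ['internal links verified', 'human refinement completed']
```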

4. AI-Only or Human-Only

Mistake: Going all-in on AI or refusing to use AI.

Impact:

- AI-only: Fast but low quality

- Human-only: High quality but slow

Fix: Aim for a balance of 40-60% AI and 40-60% human

5. No Workflow Documentation

Mistake: The team has no documented process to follow, so each person does their own thing.

Impact:

- Inconsistent output

- Training challenges

- Scaling problems

Fix: Document workflows. Train team. Iterate based on feedback.

Measuring Collaboration Success

Efficiency Metrics

Track:

- Time per piece (target: 2-3 hours)

- Pieces produced per person (target: 5-8/month)

- AI vs. human content ratio (target: 40-60% each)

- Workflow adherence (target: 90%+)
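
The AI vs. human ratio needs a working definition before it can be tracked. One possible approach, sketched below, compares the AI first draft with the published text and counts how many words of the final piece survive from the draft. This definition is an assumption, not something the workflow prescribes, and `ai_share` is a hypothetical helper built on Python's standard `difflib` module.

```python
from difflib import SequenceMatcher


def ai_share(ai_draft, final_text):
    """Estimate the share of final-text words carried over from the AI draft.

    Assumption: "AI content" means words in the final piece that survive
    unchanged, in order, from the AI first draft.
    """
    draft_words = ai_draft.split()
    final_words = final_text.split()
    if not final_words:
        return 0.0
    matcher = SequenceMatcher(None, draft_words, final_words, autojunk=False)
    kept = sum(block.size for block in matcher.get_matching_blocks())
    return kept / len(final_words)


draft = "AI tools speed up research and drafting"
final = "In my experience AI tools speed up research but not judgment"

share = ai_share(draft, final)
print(f"{share:.0%}")         # 45%
print(0.40 <= share <= 0.60)  # True
```

Word-level matching is crude: it treats a lightly reworded sentence as fully human. It is still enough to flag pieces that are published almost unchanged from the draft.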

Benchmarks:

- Low: 5+ hours/piece, 2-3 pieces/person/month

- Medium: 3-4 hours/piece, 4-6 pieces/person/month

- High: 2-3 hours/piece, 6-8 pieces/person/month

- Excellent: <2 hours/piece, 8+ pieces/person/month

Quality Metrics

Track:

- Page 1 rankings (target: 35-50%)

- AI citations (target: 25-45/month)

- Content approval rate (target: 85-95%)

- Editor revision time (target: <45 minutes)

Benchmarks:

- Low: <15% Page 1, <5 citations/month

- Medium: 15-30% Page 1, 5-20 citations/month

- High: 30-45% Page 1, 20-40 citations/month

- Excellent: 45%+ Page 1, 40+ citations/month
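
The quality benchmarks above map directly onto a small lookup. In this sketch the cutoffs are the ones listed; the function name `tier` is illustrative, and placing a value that sits exactly on a boundary (for example 30%) in the higher tier is an assumption, since the ranges above overlap at their edges.

```python
from bisect import bisect_right

TIERS = ["Low", "Medium", "High", "Excellent"]
PAGE1_CUTOFFS = [15, 30, 45]    # percent of pieces ranking on Page 1
CITATION_CUTOFFS = [5, 20, 40]  # AI citations per month


def tier(value, cutoffs):
    """Map a value to a tier; a value equal to a cutoff lands in the higher tier."""
    return TIERS[bisect_right(cutoffs, value)]


print(tier(18, PAGE1_CUTOFFS))     # Medium
print(tier(42, PAGE1_CUTOFFS))     # High
print(tier(5, CITATION_CUTOFFS))   # Medium
print(tier(34, CITATION_CUTOFFS))  # High
```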

Case Study: Workflow Implementation

Company: WorkFlowHub, a content marketing agency (6 people).

Challenge:

- No structured workflow

- Inconsistent quality

- Slow production (10 pieces/month)

- High turnover due to poor processes

Initial State (Q3 2025):

- Production: 10 pieces/month

- Time per piece: 5 hours

- Quality: Variable

- Results: 18% Page 1 rankings, 5 AI citations/month

Workflow Implementation (Q4 2025 - Q1 2026):

Phase 1: Workflow Design (Month 1):

- Designed human-AI collaboration workflow

- Created role responsibilities

- Built quality gates

- Developed templates and checklists

Phase 2: Training (Months 1-2):

- Trained team on RankDraft research

- Trained researchers on brief creation

- Trained writers on AI-assisted writing

- Trained editors on quality control

Phase 3: Implementation (Months 2-4):

- Implemented phased workflow

- Used quality gates consistently

- Tracked time and performance

- Iterated based on feedback

Optimized Workflow (Q1 2026):

- Phase 1: AI research (20 min) + Human review (10 min)

- Phase 2: AI brief (15 min) + Human refinement (15 min)

- Phase 3: AI first draft (30 min) + Human structure review (15 min)

- Phase 4: Human refinement (1.5 hours)

- Phase 5: Quality control (45 min)

- Total: 3 hours per piece

Results (Q1 2026):

Efficiency Metrics:

- Time per piece: 5 hours → 3 hours (-40%)

- Pieces per team: 10 → 28/month (+180%)

- Pieces per person: 1.7 → 4.7 (+176%)

- Workflow adherence: 40% → 92%

Quality Metrics:

- Page 1 rankings: 18% → 42% (+133%)

- AI citations: 5 → 34/month (+580%)

- First-approval rate: 45% → 88% (+96%)

- Editor revision time: 2 hours → 42 minutes (-65%)

Team Metrics:

- Team satisfaction: 2.8/5 → 4.5/5 (+61%)

- Turnover: 33% → 0%

- Training time per new hire: 2 weeks → 3 days (-79%)

ROI Metrics:

- Production ROI: 2.2x → 4.8x (+118%)

- Cost per piece: $425 → $182 (-57%)

- Client satisfaction: 3.5/5 → 4.8/5 (+37%)
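
The percentage changes reported above follow from the before and after figures. The sketch below recomputes a few of them. `pct_change` is an illustrative helper, and editor revision time is converted from 2 hours to 120 minutes so both values share a unit.

```python
def pct_change(before, after):
    """Relative change from before to after, as a rounded whole percent."""
    return round((after - before) / before * 100)


# (before, after) pairs taken from the results listed above.
results = {
    "Time per piece (hours)": (5, 3),
    "Pieces per team": (10, 28),
    "Page 1 rankings (%)": (18, 42),
    "AI citations": (5, 34),
    "Editor revision time (min)": (120, 42),
    "Cost per piece ($)": (425, 182),
}

for name, (before, after) in results.items():
    print(f"{name}: {pct_change(before, after):+d}%")
# Time per piece (hours): -40%
# Pieces per team: +180%
# Page 1 rankings (%): +133%
# AI citations: +580%
# Editor revision time (min): -65%
# Cost per piece ($): -57%
```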

Key Insights:

- Structured workflow pays: 180% more production, 40% less time

- Quality gates essential: First-approval rate nearly doubled (45% → 88%)

- Human refinement non-negotiable: AI citations rose 580% once this phase was in place

- Team satisfaction matters: Turnover eliminated, satisfaction up 61%

Conclusion

Human-AI collaboration workflows combine AI efficiency with human quality. The sweet spot: 40-60% AI (research, structure, foundation) + 40-60% human (insights, experience, voice).

Build workflows with:

- AI-first research (SERP analysis, competitor analysis)

- Collaborative briefing (AI structure + human unique angle)

- AI-first drafting (foundation from brief)

- Human refinement (critical phase)

- Collaborative quality control (AI checks + human fact-checking)

Teams with structured human-AI workflows produce 2.5x more content at 3.8x higher quality.

Document workflows. Train team. Track metrics. Iterate continuously.

Ready to build better human-AI workflows? Use RankDraft's collaboration features to coordinate research, writing, and optimization across your team.