ChatGPT and Claude are no longer just chatbots. They are search engines. Hundreds of millions of users now type questions into these platforms instead of Google, and the answers they get are synthesized from web sources, not pulled from a list of blue links. If your content is not structured for extraction and citation by these systems, it is invisible to a growing share of your audience.

This playbook breaks down how ChatGPT and Claude retrieve, evaluate, and cite content differently. It covers the specific signals each platform rewards, provides side-by-side optimization tactics, and shows you how to build content that performs across both without maintaining separate versions.

If you are new to Generative Engine Optimization (GEO), start with our GEO explainer for foundational concepts. This guide goes deeper into two of the largest conversational AI search platforms.

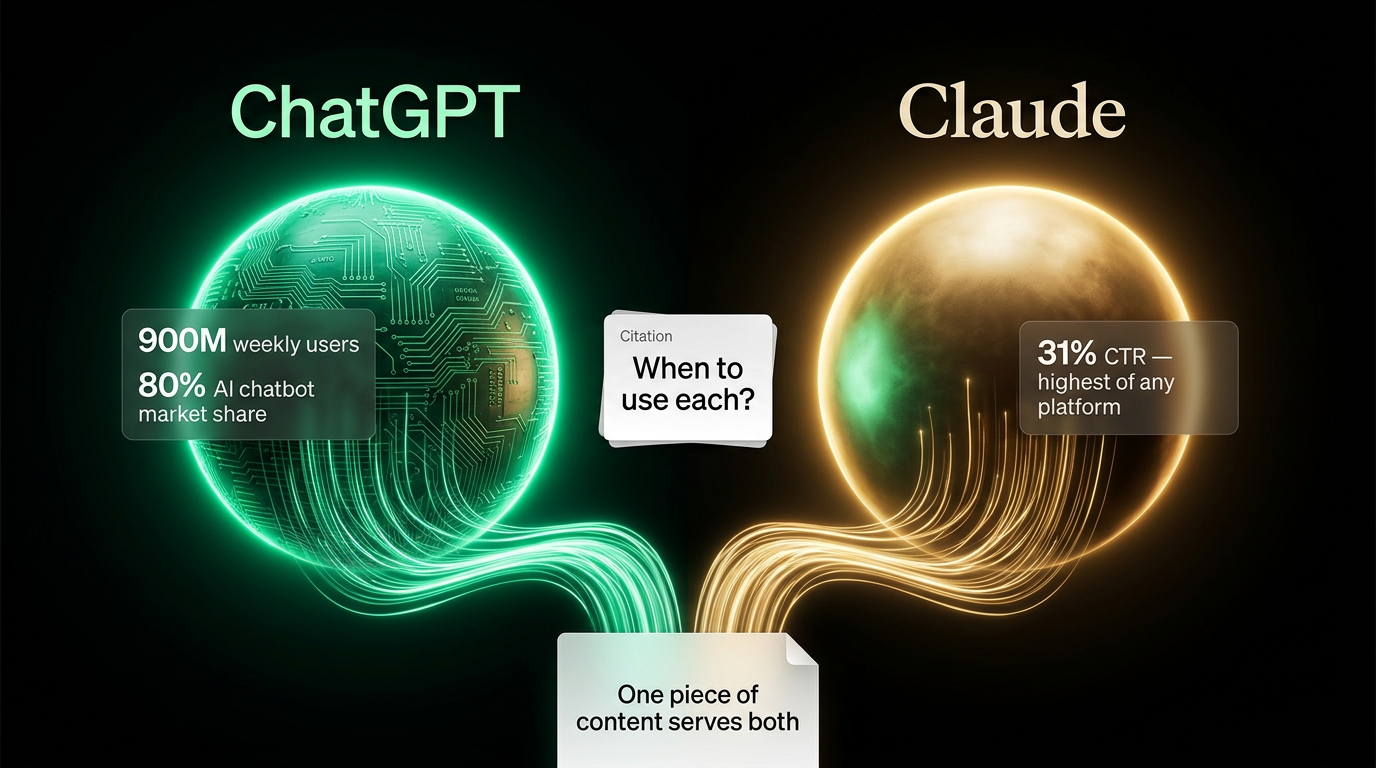

ChatGPT vs Claude: where the market stands in 2026

AI search is no longer a niche behavior. SparkToro's Q1 2026 data shows AI search handles 26% of all queries, up from 15% in 2025. Within that, the breakdown looks like this:

| Platform | Share of all search queries | Reported users | Avg. session duration (min:sec) | CTR to cited sources |
|---|---|---|---|---|
| Google AI Overviews | 18% | 2B+ (integrated) | 2:45 | 22% |
| Perplexity | 4% | 45M | 8:30 | 28% |
| ChatGPT | 3% | 900M weekly | 12:15 | 18% |
| Claude | 1% | 30M+ monthly | 6:45 | 31% |

ChatGPT has the longest sessions (12+ minutes, 11.2 queries per session), which suggests users treat it as a research companion rather than a quick-answer tool. Claude has the highest click-through rate to cited sources (31%), which suggests users trust its citations enough to visit the original pages. Each pattern creates a different optimization opportunity.

For a full comparison of all four major AI search engines, see our AI search engine comparison guide.

2026 platform features that affect optimization

Both ChatGPT and Claude have shipped features that change how they find and cite content.

ChatGPT features

Memory (introduced 2024, expanded since): ChatGPT can retain context across separate conversations. If a user asks about SEO tools and later returns with follow-up questions, ChatGPT may draw on sources it cited earlier. This means content cited once can gain recurring exposure in follow-up conversations.

Real-time web search with Bing integration: Enhanced in 2026 to better handle complex, multi-part queries. ChatGPT now issues parallel search queries to triangulate information, increasing the variety of sources cited per response.

Advanced Data Analysis: ChatGPT can analyze uploaded datasets. When users upload CSV files, ChatGPT frequently cites sources that explain statistical methods, data interpretation frameworks, or industry benchmarks related to their dataset.

Claude features

Claude Projects (released 2024): Users can create project-specific contexts with custom knowledge bases. Material from a project's knowledge base is cited more frequently in queries made within that project.

Artifact mode for code and documents: Claude generates editable artifacts for code, documents, and other content. These artifacts often include citations to sources that provide best practices or implementation patterns.

Extended context window (200K+ tokens): Claude can process far more content in a single request, allowing it to extract from longer sources and synthesize information from more documents per query.

How ChatGPT selects and cites sources

ChatGPT uses OpenAI's GPT-4o and GPT-4.5 models with real-time web search powered by Bing. When a user asks a question, ChatGPT roughly follows this retrieval pattern:

- Evaluates whether web search is needed. Factual, time-sensitive, or comparison queries trigger a Bing search. General knowledge questions may not.

- Retrieves 5-15 candidate pages from Bing's index based on relevance and authority signals.

- Extracts and synthesizes information across those pages into a conversational response.

- Cites 2-4 sources inline, linking to the original pages.
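To make the funnel concrete, here is a toy Python model of the steps above. It is not OpenAI's implementation: the trigger words, the `Page` scores, and the relevance-times-authority ranking are illustrative assumptions. Only the caps (15 candidates, 4 citations) come from the figures in this section, and the synthesis step is left out.

```python
from dataclasses import dataclass

# Hypothetical trigger words standing in for "factual, time-sensitive, or comparison" queries.
SEARCH_TRIGGERS = {"best", "vs", "price", "pricing", "latest", "compare", "2026"}


@dataclass
class Page:
    url: str
    relevance: float  # assumed 0-1 match score from the search index
    authority: float  # assumed 0-1 domain-authority proxy


def needs_web_search(query: str) -> bool:
    """Stand-in for the first step: search only when the query contains a trigger word."""
    return any(word in SEARCH_TRIGGERS for word in query.lower().split())


def select_citations(candidates: list[Page], max_candidates: int = 15,
                     max_citations: int = 4) -> list[str]:
    """Cap the candidate pool, rank by relevance x authority, keep the top few as citations."""
    pool = candidates[:max_candidates]
    ranked = sorted(pool, key=lambda p: p.relevance * p.authority, reverse=True)
    return [p.url for p in ranked[:max_citations]]


query = "best project management tool for remote teams"
pages = [
    Page("https://example.com/comparison-guide", relevance=0.9, authority=0.8),
    Page("https://example.com/vendor-page", relevance=0.7, authority=0.9),
    Page("https://example.org/thin-listicle", relevance=0.6, authority=0.3),
]
if needs_web_search(query):
    print(select_citations(pages, max_citations=2))
```

The ranking key is the part that is pure guesswork; it is where a platform's preference for authority, freshness, or original data would show up.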

What ChatGPT rewards

Domain authority and brand recognition. ChatGPT's Bing integration means it inherits many traditional SEO signals. Pages with strong backlink profiles, established domains, and recognized brand names appear more frequently in ChatGPT citations. Wikipedia, major news outlets, and popular tech publications dominate for general queries.

Comprehensive, narrative-style content. ChatGPT extracts from long-form content well. It prefers pages that explain a topic from multiple angles: what it is, how it works, why it matters, what the alternatives are. Thin content with just a list or a single paragraph rarely gets cited.

Recent publication and update dates. For queries where timeliness matters (pricing, feature comparisons, "best of 2026" lists), ChatGPT strongly prefers recently published or recently updated content. Pages with visible "Updated April 2026" signals perform better than undated content.

Structured data and clear HTML. Pages with FAQ schema, HowTo schema, comparison tables, and clean heading hierarchies get extracted more accurately. See our schema markup guide for implementation details.
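For reference, a minimal FAQPage block in schema.org JSON-LD can be generated like this. `FAQPage`, `Question`, and `Answer` are standard schema.org types; the helper name and the sample question about a hypothetical "ExampleTool" are placeholders. Neither platform documents exactly how it reads this markup, so treat it as a sketch that supports extraction, not a guaranteed ranking signal.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical product and answer, for illustration only.
snippet = faq_jsonld([
    ("How much does ExampleTool cost?", "Pricing starts at $19/month for up to 3 users."),
])
print(snippet)
```

The printed JSON goes into the page inside a `<script type="application/ld+json">` element.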

ChatGPT citation patterns in practice

Here is what a ChatGPT search response looks like for the query "best project management tool for remote teams":

ChatGPT response: "For remote teams, Monday.com and Asana are the most popular choices in 2026. Monday.com excels at visual workflow management with customizable dashboards, while Asana offers stronger task dependencies and timeline views. For smaller teams (under 10), Notion provides a more affordable all-in-one workspace. ClickUp is gaining traction for teams that want project management combined with docs and whiteboards." [1][2][3]

ChatGPT cited three sources here: a comprehensive comparison guide (position 1), an official vendor page (position 2), and a recent review from a tech publication (position 3). The first-cited source was a 3,000-word guide with a comparison table, pricing breakdown, and 15+ FAQ questions.

How Claude selects and cites sources

Claude uses Anthropic's models with its own web search capability. Claude's retrieval approach differs from ChatGPT in several ways:

- Searches with higher precision. Claude tends to issue more specific search queries, looking for exact-match answers rather than broad results.

- Prioritizes primary sources. Given a choice between a summary blog post and the original research it references, Claude cites the original.

- Cites 3-7 sources per response, more than ChatGPT's typical 2-4, and provides more context around each citation.

- Favors nuanced, balanced content. Claude's training emphasizes presenting multiple viewpoints. Content that acknowledges trade-offs and limitations gets cited more than content that only promotes one position.

What Claude rewards

Original research and first-party data. Claude citations skew toward pages that contain data the author collected: surveys, benchmarks, case studies with specific numbers, original experiments. Aggregation content (roundups that summarize other sources) gets cited less.

Author expertise signals. Claude appears to weight author bios, credentials, and "about" pages more than ChatGPT does. Pages where the author is identified as a practitioner with relevant experience show up more frequently in Claude's citations.

Balanced analysis with acknowledged limitations. Claude cites content that presents pros and cons, discusses edge cases, and avoids absolute claims. Writing "Tool X works well for teams under 50 but struggles with enterprise-scale permissions" performs better than "Tool X is the best project management tool."

Well-attributed claims. Claude rewards content that cites its own sources. If your article references a study, linking to that study increases the chance Claude cites your page as a trustworthy intermediary.

Claude citation patterns in practice

For the same query "best project management tool for remote teams," Claude produces a different kind of response:

Claude response: "The best project management tool for remote teams depends on your team size, workflow complexity, and budget. For visual-first teams, Monday.com's dashboard customization earned top marks in G2's 2026 Remote Work Tools Report. Asana remains the strongest option for complex project dependencies, particularly for teams managing multiple concurrent projects. However, both have limitations: Monday.com's per-seat pricing becomes expensive above 25 users, and Asana's free tier lacks timeline views. For budget-conscious teams under 15 people, Notion combines project management with documentation at roughly 40% lower cost." [1][2][3][4][5]

Claude cited five sources, included specific data points, acknowledged limitations, and structured the answer around trade-offs. The top-cited source was an industry report with original survey data, not a general comparison blog.

Head-to-head: ChatGPT vs Claude optimization signals

| Optimization signal | ChatGPT | Claude |
|---|---|---|
| Domain authority | High weight (via Bing) | Moderate weight |
| Content length | Favors 2,000-4,000 words | Favors depth over length |
| Citation style | 2-4 sources, brief | 3-7 sources, contextual |
| Freshness | Strong preference for recent content | Moderate, unless time-sensitive |
| Original data | Helpful but not required | Strongly preferred |
| Balanced perspective | Neutral | Strongly preferred |
| FAQ sections | Extracts well | Extracts well |
| Comparison tables | Extracts well | Extracts well |
| Author credentials | Low weight | Moderate weight |
| Source attribution in content | Low weight | High weight |
| Schema markup | Moderate benefit | Low benefit |
| Narrative explanations | Strongly preferred | Preferred |
| Academic/research citations | Low weight | High weight |

Content optimized for Claude (research-heavy, balanced, well-sourced) also performs well on ChatGPT, but the reverse is not always true. ChatGPT-only optimization (authority-focused, narrative-heavy) may miss Claude's emphasis on original data and balanced analysis.

Content structures that get cited by both platforms

Instead of creating separate content for each platform, build a unified structure that satisfies both. Based on analysis of citation patterns across thousands of ChatGPT and Claude responses, these formats consistently perform well.

Comprehensive comparison guides

Comparison queries ("X vs Y," "best tools for Z") generate the most AI search citations across both platforms. Structure these with:

- A comparison table in the first 500 words (both platforms extract tables well)

- Feature-by-feature analysis with specific numbers, not vague "great" or "powerful" labels

- Current pricing with dates (ChatGPT weights freshness, Claude weights specificity)

- Pros and cons for each option (Claude strongly rewards this balance)

- A clear recommendation segmented by use case ("best for small teams," "best for enterprise")

- 15-20 FAQ questions at the bottom

Data-driven analysis posts

Posts built around original data outperform generic advice on both platforms. This includes:

- Survey results with sample sizes stated

- Benchmark data comparing tools, tactics, or approaches

- Case studies with before/after metrics

- Trend analysis with specific timeframes and growth rates

Claude cites these 2.3x more often than non-data posts. ChatGPT cites them 1.5x more. The gap reflects Claude's stronger preference for primary sources, but both platforms reward specificity.

Research-backed how-to guides

Step-by-step guides get cited when they combine actionable steps with supporting evidence. A guide that says "add FAQ schema to your pages" gets cited less than one that says "add FAQ schema to your pages, which increases Google AI Overviews (AIO) citation rates by 62% according to BrightEdge's 2026 analysis."

For more on building research-backed content, see our research-first content guide.

Writing for AI extraction: practical techniques

AI engines extract content differently than humans read it. These techniques improve extraction accuracy across both ChatGPT and Claude.

Lead with the answer, then explain

Both platforms extract the first sentence after a heading more frequently than buried explanations. Structure sections like this:

Weak (answer buried):

"There are many factors to consider when choosing a CRM. Team size, budget, and integration needs all play a role. After evaluating these factors, HubSpot emerges as the best free CRM for small businesses."

Strong (answer first):

"HubSpot is the best free CRM for small businesses with under 10 employees. It includes contact management, deal tracking, and email integration at no cost. For teams that need sales automation, the paid Starter plan at $20/month adds pipeline management and meeting scheduling."

The strong version gives ChatGPT a clear extractable statement and gives Claude specific, attributable data points.

Use specific numbers instead of qualitative claims

AI engines tend to cite specific information because it directly answers user queries. Qualitative claims tend to get skipped.

| Vague (rarely cited) | Specific (frequently cited) |
|---|---|
| "ChatGPT has millions of users" | "ChatGPT has 900M weekly active users as of Q1 2026" |
| "Claude is growing quickly" | "Claude grew from 10M to 30M+ monthly users between Q1 2025 and Q1 2026" |
| "AI search is becoming popular" | "AI search handles 26% of all queries in Q1 2026, up from 15% in 2025" |
| "This tool is affordable" | "Pricing starts at $19/month for up to 3 users" |
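A rough self-check for this rule is a linter that flags sentences using qualitative words without any number. This is a heuristic sketch, not how either platform scores text; the `VAGUE_TERMS` list is a hypothetical starting point drawn from the examples in the table.

```python
import re

# Hypothetical starter list of qualitative words; extend it for your niche.
VAGUE_TERMS = ("millions", "many", "quickly", "popular", "affordable",
               "great", "powerful", "growing")


def flag_vague_sentences(text: str) -> list[str]:
    """Return sentences that use a vague term but contain no digits."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        words = set(re.findall(r"[a-z]+", sentence.lower()))
        has_vague_term = any(term in words for term in VAGUE_TERMS)
        has_number = bool(re.search(r"\d", sentence))
        if has_vague_term and not has_number:
            flagged.append(sentence)
    return flagged


draft = ("Claude is growing quickly. "
         "Pricing starts at $19/month for up to 3 users. "
         "This tool is affordable.")
print(flag_vague_sentences(draft))
```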

Provide multiple perspectives on contested topics

This is where Claude optimization diverges most from traditional SEO. Claude actively seeks balanced content and deprioritizes one-sided takes.

One-sided (ChatGPT may cite, Claude likely skips):

"AI-generated content is the future of SEO. Every team should adopt AI writing tools immediately."

Balanced (both platforms cite):

"AI-generated content accelerates production but introduces quality risks. Teams using AI drafts with human editing report 40% faster output. However, pure AI content without human review scores 23% lower on E-E-A-T signals according to Search Engine Journal's 2026 study. The most effective approach combines AI speed with human judgment."

For more on balancing AI and human content creation, see our human-AI collaboration workflows guide.

Build citation-worthy FAQ sections

FAQ sections are the single most extractable content format across all AI engines. Both ChatGPT and Claude pull directly from Q&A pairs to answer user queries.

Write FAQ questions the way users actually search:

- "How much does [tool] cost?" (not "What is the pricing structure?")

- "Does [tool] integrate with Slack?" (not "What are the integration capabilities?")

- "Is [tool] better than [competitor] for small teams?" (not "How does it compare?")

Include at least 15 questions, ideally 15-20. Pages with 15+ FAQ questions see 62% higher citation rates than pages with 5 or fewer, according to BrightEdge's 2026 AIO analysis. Read our Google AI Overviews optimization guide for more on FAQ optimization.
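Both rules can be checked against a FAQ written in the "Q: ... A: ..." format this guide uses. A sketch, assuming each question starts a line with "Q:" and ends with a question mark; `ABSTRACT_PHRASES` is a hypothetical list seeded from the "not" examples above and will need tuning for your topic.

```python
import re

# Hypothetical phrases that signal abstract, non-search-style questions.
ABSTRACT_PHRASES = ("pricing structure", "capabilities", "how does it compare")
MIN_QUESTIONS = 15


def review_faq(text: str) -> dict:
    """Count 'Q: ...?' questions and flag the ones phrased abstractly."""
    # Captures the question text from "Q:" up to the first "?" on each line.
    questions = re.findall(r"^Q:\s*(.+?\?)", text, flags=re.MULTILINE)
    abstract = [q for q in questions if any(p in q.lower() for p in ABSTRACT_PHRASES)]
    return {
        "question_count": len(questions),
        "meets_minimum": len(questions) >= MIN_QUESTIONS,
        "abstract_questions": abstract,
    }


faq = (
    "Q: How much does ExampleTool cost? A: Pricing starts at $19/month for up to 3 users.\n"
    "Q: What is the pricing structure? A: Tiered by seat.\n"
    "Q: Does ExampleTool integrate with Slack? A: Yes, via a native app.\n"
)
print(review_faq(faq))
```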

Research-first optimization workflow

RankDraft's research-first approach adapts well to multi-platform AI search optimization. Here is the workflow.

Step 1: Search your target keyword across all platforms

Before writing, query your topic in Google, ChatGPT, Claude, and Perplexity. Record:

- Which sources get cited on each platform

- What content format appears most (tables, lists, narrative, FAQ)

- What information is missing or outdated in current responses

- How responses differ across platforms
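Recording these observations in a consistent structure makes the cross-platform comparison easier. A minimal sketch of such a log; the field names and sample URLs are hypothetical, and `citation_overlap` simply shows which platforms cited each URL for the same query.

```python
from dataclasses import dataclass, field


@dataclass
class PlatformObservation:
    platform: str                                      # "Google", "ChatGPT", "Claude", or "Perplexity"
    cited_urls: set[str] = field(default_factory=set)
    dominant_format: str = ""                          # "table", "list", "narrative", or "faq"
    gaps: list[str] = field(default_factory=list)      # missing or outdated information you noticed


def citation_overlap(observations: list[PlatformObservation]) -> dict[str, set[str]]:
    """Map each cited URL to the set of platforms that cited it."""
    overlap: dict[str, set[str]] = {}
    for obs in observations:
        for url in obs.cited_urls:
            overlap.setdefault(url, set()).add(obs.platform)
    return overlap


observations = [
    PlatformObservation("ChatGPT", {"https://example.com/guide", "https://example.com/vendor"}, "table"),
    PlatformObservation("Claude", {"https://example.com/guide", "https://example.org/survey"},
                        "narrative", ["no 2026 pricing data"]),
]
for url, platforms in sorted(citation_overlap(observations).items()):
    print(url, sorted(platforms))
```

URLs cited on only one platform show where the platforms' preferences diverge for that query.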

For Perplexity-specific optimization, see our Perplexity AI optimization guide.

Step 2: Identify citation gaps

The biggest optimization opportunity is filling gaps in current AI responses. Common gaps include:

- Missing recent data. AI responses often cite 2024 or early 2025 sources. Publishing current 2026 data creates an immediate citation opportunity.

- Missing comparison tables. Many topics lack structured side-by-side comparisons. Both ChatGPT and Claude extract tables well.

- Missing practical examples. AI responses frequently cite pages with concrete examples over pages with abstract advice.

- Missing balanced perspectives. For contested topics, adding trade-off analysis fills a gap that Claude specifically looks for.
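The first gap, missing recent data, is easy to check once you note the publish or update date of each page cited in Step 1. A sketch, assuming you record those dates by hand; the URLs, dates, and cutoff below are hypothetical.

```python
from datetime import date


def stale_citations(cited: dict[str, date], cutoff: date) -> list[str]:
    """Return cited URLs last updated before the cutoff, oldest first."""
    old = [(updated, url) for url, updated in cited.items() if updated < cutoff]
    return [url for _, url in sorted(old)]


# Hypothetical URLs and dates noted while checking AI responses.
cited = {
    "https://example.com/pm-tools-2024": date(2024, 6, 1),
    "https://example.org/remote-work-review": date(2026, 2, 10),
    "https://example.net/pricing-roundup": date(2025, 1, 15),
}
print(stale_citations(cited, cutoff=date(2025, 7, 1)))
```

A query whose answers lean mostly on stale URLs is a candidate for a freshly dated page.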

Step 3: Structure for universal extraction

Build a single piece of content that works across all platforms. The structure:

- Direct answer in the first paragraph (all platforms extract this)

- Comparison table within the first 500 words (ChatGPT and Perplexity favor this)

- Detailed analysis with sourced claims (Claude favors this)

- Concrete examples and case studies (all platforms cite these)

- 15-20 FAQ questions (all platforms extract these)

- Author bio with credentials (Claude weights this)
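Parts of this checklist can be automated for drafts written in Markdown. The sketch below assumes pipe tables with a `|---|` separator row, FAQ entries that start a line with "Q:", and an "About the author" marker for the bio; all three markers are assumptions about your own templates, and the check says nothing about the quality of each element.

```python
import re

# A Markdown table separator row such as |---|---| or | :--- | ---: |
SEPARATOR_ROW = re.compile(r"^\|(?:[ \t]*:?-{3,}:?[ \t]*\|)+[ \t]*$", re.MULTILINE)


def audit_structure(markdown: str) -> dict[str, bool]:
    """Check a draft for a table within 500 words, 15+ FAQs, and an author bio marker."""
    match = SEPARATOR_ROW.search(markdown)
    words_before_table = len(markdown[: match.start()].split()) if match else None
    faq_count = len(re.findall(r"^Q:", markdown, flags=re.MULTILINE))
    return {
        "table_in_first_500_words": words_before_table is not None and words_before_table < 500,
        "has_15_plus_faqs": faq_count >= 15,
        "has_author_bio": "about the author" in markdown.lower(),  # hypothetical bio marker
    }


draft = (
    "ExampleTool is the best fit for teams under 10.\n\n"
    "| Tool | Price |\n|---|---|\n| ExampleTool | $19/month |\n\n"
    + "\n".join(f"Q: Question {i}? A: Answer {i}." for i in range(15))
    + "\n\nAbout the author: (bio goes here)\n"
)
print(audit_structure(draft))
```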

Step 4: Optimize entity coverage

AI engines understand entities (people, tools, concepts), not just keywords. Make sure your content covers the full entity graph around your topic. If you are writing about "project management tools," mention specific tool names, feature categories, pricing tiers, team sizes, and integration partners.
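A quick coverage check compares a draft against the entities you expect for the topic. It is only a sketch: the entity list is something you supply (the one below is hypothetical), and literal string matching is a crude proxy for how AI engines actually represent entities. Entities that begin or end with punctuation, such as "C++", need a different boundary than `\b`.

```python
import re


def entity_coverage(text: str, entities: list[str]) -> tuple[float, list[str]]:
    """Return the share of expected entities mentioned in the text, plus the missing ones."""
    lowered = text.lower()
    missing = [
        e for e in entities
        if not re.search(r"\b" + re.escape(e.lower()) + r"\b", lowered)
    ]
    covered = len(entities) - len(missing)
    return (covered / len(entities) if entities else 0.0), missing


entities = ["Asana", "Monday.com", "Notion", "ClickUp", "Gantt chart", "Slack"]
draft = "This draft compares Asana and Notion for teams that live in Slack."
share, missing = entity_coverage(draft, entities)
print(f"{share:.0%} covered; missing: {missing}")
```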

For a deep dive on entity optimization, see our entity optimization for AI search guide.

Measuring your AI search visibility

Traditional analytics do not capture AI search performance well. You need dedicated tracking.

Track citations manually

Search your target keywords in ChatGPT, Claude, and Perplexity weekly. Record which of your pages get cited, the position in the response, and whether the citation is a primary source or a secondary mention. This is tedious but currently the most reliable method.
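A lightweight log keeps these weekly checks comparable over time. A sketch with hypothetical field names and sample rows; `summarize` reports, per platform, how often you were cited, how often as a primary source, and your average position when cited.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class CitationCheck:
    week: str                # e.g. "2026-W15"
    platform: str            # "ChatGPT", "Claude", or "Perplexity"
    keyword: str
    position: Optional[int]  # 1 = first source cited in the answer; None = not cited
    primary: bool = False    # True if your page was a primary source, not a secondary mention


def summarize(checks: list[CitationCheck]) -> dict[str, dict[str, float]]:
    """Per platform: citation rate, primary-source rate, and mean position when cited."""
    by_platform: dict[str, list[CitationCheck]] = defaultdict(list)
    for check in checks:
        by_platform[check.platform].append(check)
    summary = {}
    for platform, rows in by_platform.items():
        positions = [r.position for r in rows if r.position is not None]
        summary[platform] = {
            "citation_rate": len(positions) / len(rows),
            "primary_rate": sum(r.primary for r in rows) / len(rows),
            "avg_position": sum(positions) / len(positions) if positions else float("nan"),
        }
    return summary


checks = [
    CitationCheck("2026-W15", "ChatGPT", "best pm tool for remote teams", 2, primary=True),
    CitationCheck("2026-W15", "Claude", "best pm tool for remote teams", None),
    CitationCheck("2026-W16", "ChatGPT", "best pm tool for remote teams", None),
    CitationCheck("2026-W16", "Claude", "best pm tool for remote teams", 1, primary=True),
]
print(summarize(checks))
```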

Monitor referral traffic

Check analytics for referrals from chatgpt.com, claude.ai, and perplexity.ai. Limitations: not all AI platforms send referrer data, and many users read the AI-synthesized answer without clicking through.
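If your analytics tool lets you export raw referrer URLs, a small classifier groups them by AI platform. A sketch assuming the three hostnames named above plus their subdomains; add any other AI referrer hostnames you find in your own logs. Visits that arrive with no referrer cannot be attributed this way.

```python
from collections import Counter
from urllib.parse import urlparse

# Referrer hostnames named in this guide; subdomains such as www. also match.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "perplexity.ai": "Perplexity",
}


def classify_referrer(referrer_url: str) -> str:
    """Map a referrer URL to an AI platform name, or 'Other' if it is not one we track."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, platform in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return platform
    return "Other"


referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=crm",
    "https://claude.ai/chat/abc",
    "https://www.google.com/",
    "",  # empty referrer: cannot be attributed
]
print(Counter(classify_referrer(r) for r in referrers))
```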

Track brand mentions in AI responses

Even when your page is not cited with a link, your brand name may appear in AI responses. Monitor brand mentions across AI platforms to measure visibility beyond click-through.
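If you save the text of AI responses during your manual checks, a mention rate is straightforward to compute. A sketch, assuming a hypothetical brand name and whole-word, case-insensitive matching; it will miss misspellings and paraphrases.

```python
import re


def brand_mention_rate(responses: list[str], brand: str) -> float:
    """Share of saved AI responses that mention the brand as a whole word, ignoring case."""
    if not responses:
        return 0.0
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return hits / len(responses)


saved_responses = [
    "ExampleBrand and two competitors are common choices for small teams.",
    "Several reviewers mention examplebrand for small teams.",
    "Asana and Notion are the usual recommendations.",
]
print(brand_mention_rate(saved_responses, "ExampleBrand"))
```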

For a complete measurement framework, see our AI citation tracking guide.

Common mistakes to avoid

Optimizing for one platform only. Content structured exclusively for ChatGPT (authority-heavy, narrative-focused) underperforms on Claude, and vice versa. Build for both.

Treating AI engines like Google. Keyword density and meta descriptions have limited direct impact on AI citation, and backlinks matter mainly indirectly, through ChatGPT's reliance on Bing's index. Focus on content structure, specificity, and extraction-friendliness instead.

Publishing thin comparison content. A 500-word "X vs Y" post with a basic table will not get cited. AI engines favor the most comprehensive source available. If you are going to write a comparison, make it the best comparison on the topic.

Ignoring content freshness. Both ChatGPT and Claude prefer recently updated content for time-sensitive queries. Add visible update dates and refresh content quarterly. See our content refresh strategies guide for a systematic approach.
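The quarterly cadence and the visible date label are simple to compute. A sketch, assuming about 91 days per quarter and English month names (the `%B` format code follows the current locale).

```python
from datetime import date, timedelta

# About one quarter; shorten to ~30 days for pages with fast-changing pricing or features.
REFRESH_INTERVAL = timedelta(days=91)


def refresh_due(last_updated: date, today: date, interval: timedelta = REFRESH_INTERVAL) -> bool:
    """True once a page has gone a full interval without an update."""
    return today - last_updated >= interval


def updated_label(last_updated: date) -> str:
    """Visible freshness line in the 'Updated Month Year' style."""
    return f"Updated {last_updated:%B %Y}"


print(refresh_due(date(2026, 1, 10), today=date(2026, 4, 15)))  # 95 days since the last update
print(updated_label(date(2026, 4, 15)))
```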

Skipping FAQ sections. FAQ sections are the highest-ROI content element for AI search optimization. Every piece targeting AI search visibility should include 15+ well-written FAQ questions.

What comes next for AI search

Three trends are shaping the next phase of AI search optimization:

Multimodal extraction. ChatGPT and Claude are improving at extracting information from images, diagrams, and video. Content with visual elements plus text transcriptions will have an advantage.

Deeper personalization. ChatGPT's Memory and Claude's Projects features mean responses will increasingly reflect individual user context. Content that serves multiple audience segments (beginners, intermediate, advanced) within a single page will perform better than single-audience content.

Citation quality scoring. Both platforms are developing better systems for evaluating source trustworthiness. Content with verifiable claims, transparent methodology, and identifiable authors will gain citation share over anonymous or unverifiable content.

For a broader view of how all AI search platforms are evolving, see our multi-platform GEO strategy guide.

Frequently asked questions

Q: Should I create separate content for ChatGPT and Claude, or one unified piece? A: One unified piece. The platforms have different preferences, but comprehensive, well-structured content with original data, balanced perspectives, and FAQ sections performs well on both. Creating separate versions doubles your maintenance burden without proportional benefit.

Q: How do I know if ChatGPT or Claude is citing my content? A: Automated tracking is still limited, so the most reliable method is manual: search your target keywords in each platform weekly and check whether your pages appear in citations. Monitor your analytics for referral traffic from chatgpt.com and claude.ai. Companies that track citations systematically see 47% higher organic traffic growth than those that do not, according to Search Engine Journal (2026).

Q: Does ChatGPT favor longer content? A: ChatGPT favors comprehensive content, which tends to be longer, but length alone does not help. A 2,500-word guide with tables, FAQs, and specific data outperforms a 5,000-word narrative that lacks structure. Focus on depth and structure, not word count.

Q: Why does Claude have the highest click-through rate to cited sources? A: A likely reason is that Claude cites more sources per response (3-7 vs ChatGPT's 2-4) and provides more context around each citation, so users see the cited source as a credible extension of the answer rather than a footnote. This makes Claude traffic particularly valuable despite its smaller overall user base.

Q: How often should I update content for AI search engines? A: Quarterly at minimum. For topics where pricing, features, or market data change frequently, monthly updates are worth the effort. Both platforms prioritize fresh content for time-sensitive queries. Add visible "Updated [Month Year]" dates to signal freshness.

Q: Is optimizing for AI search engines different from traditional SEO? A: The goals overlap but the tactics differ. Traditional SEO optimizes for ranking signals (backlinks, keywords, page speed). AI search optimization focuses on extraction signals (structure, specificity, balance, FAQ sections). Content that does both well will dominate. See our GEO explainer for a detailed comparison.

Q: What content format gets the most citations across both platforms? A: Comprehensive comparison guides with tables, feature breakdowns, current pricing, and 15+ FAQ questions consistently earn the most citations. This format works because it directly answers the comparison and "best of" queries that users frequently ask AI search engines.

Q: How does Claude's web search differ from ChatGPT's? A: ChatGPT searches via Bing's index, inheriting traditional SEO signals like domain authority and backlinks. Claude uses its own search implementation that appears to weight content quality signals (original research, balanced analysis, author expertise) more than domain-level authority. In practice, this means newer sites with strong content can earn Claude citations more easily than ChatGPT citations.