Zapier generates 16 million organic visitors per month from over 70,000 programmatic pages. Canva drives 100 million monthly visits through 190,000 indexed template pages. Wise attracts 60 million organic visitors from 8.5 million currency converter pages. These are not template spam farms. They are programmatic SEO v2 engines that combine automation with genuine information gain.

Traditional programmatic SEO failed because it produced low-quality, thin content that search engines learned to penalize. Programmatic SEO v2 takes a fundamentally different approach: research-first methodology applied at scale, with human oversight as a non-negotiable quality gate. The result is content that ranks in Google, earns citations from AI search engines like Perplexity and ChatGPT, and actually converts visitors.

This guide covers the complete framework for building programmatic SEO v2 engines in 2026, with concrete examples, data, and implementation steps.

Why Programmatic SEO v1 Died

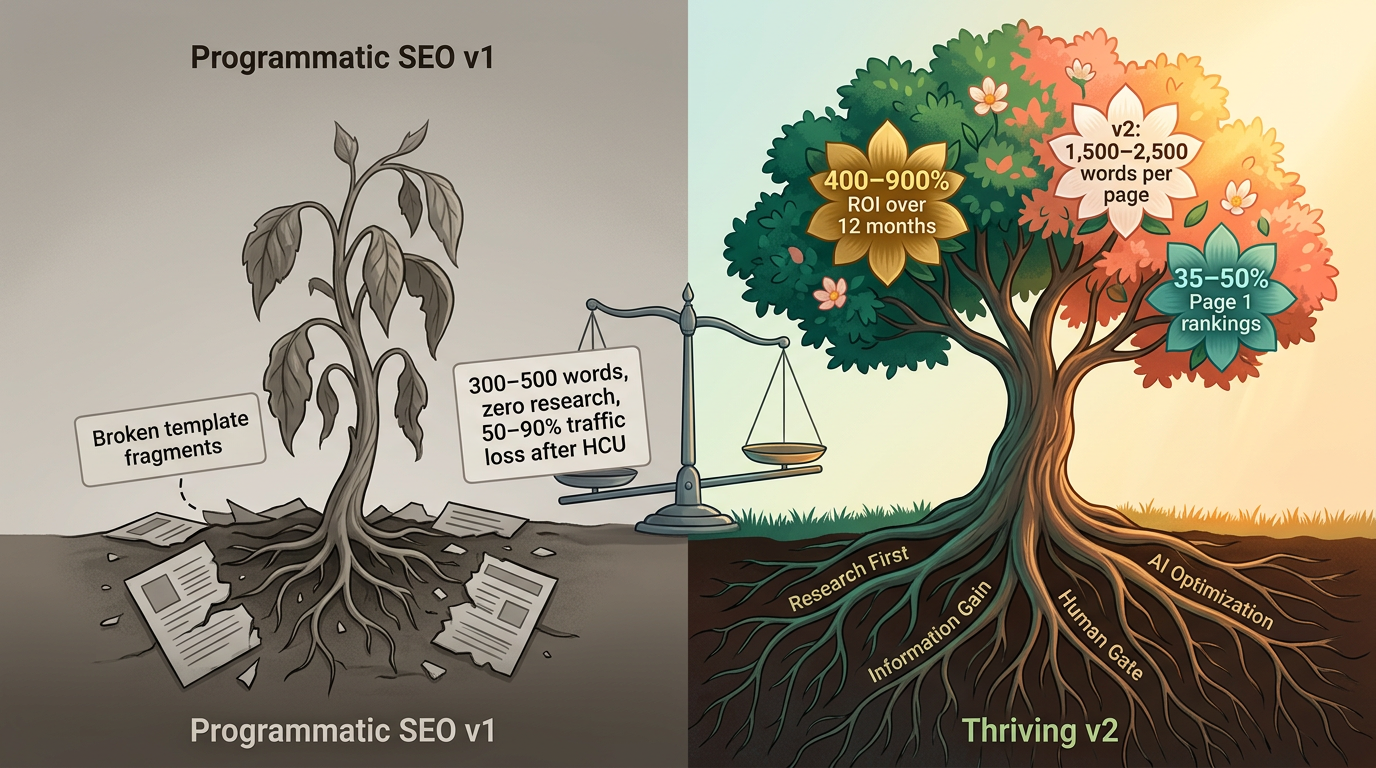

Google's Helpful Content system (part of the core algorithm since March 2024) and its "Scaled Content Abuse" policy were the final nails in the coffin. Sites that generated thousands of thin pages from basic templates lost 50-90% of their traffic. The August 2025 Spam Update specifically targeted programmatic SEO and mass-generated content. Of 3,000+ penalized sites tracked from September 2023 through June 2025, only a minority achieved full recovery.

What v1 Looked Like

- Template pages with variable insertion ("Best [Product] in [City]")

- Thin content (300-500 words per page)

- No research, no original data, no expertise

- Identical structure across thousands of pages

- Zero optimization for AI search engines

Why It Failed

Google's internal system (codenamed "Firefly") specifically detects scaled content abuse patterns. The key distinction Google draws: automation is not the problem. The lack of genuine user value is. Every page must pass what Google calls the "information gain" test: does this page add new information to the search results that was not already available?

The consequences are severe: ranking demotions, complete removal from search results, and recovery timelines of 3-12 months spanning multiple core update cycles. For a deeper look at what Google actually rewards, see our Human-First SEO Guide.

Programmatic SEO v2: What Changed

Programmatic SEO v2 is not just "better templates." It is a fundamentally different approach built on four pillars:

1. Research-first methodology. Every template category gets multi-platform SERP research across Google, Perplexity, ChatGPT, and Claude before a single page is generated. This ensures each page addresses real user questions and gaps in existing content.

2. Information gain per page. Each page must contribute something beyond what is already available: original data, proprietary analysis, expert review, or a clearer framework. The litmus test: "If I removed the variable (city name, product name) from this page, would the remaining content still be useful?" If the answer is no, the page needs more depth.

3. AI search optimization. With AI Overviews appearing in 25.8% of all US searches (January 2026) and Perplexity processing 1.2-1.5 billion queries per month, programmatic pages must be structured for AI extraction. Comparison tables, FAQ blocks, and structured data are not optional.

4. Human quality gate. 30-40% of the effort is human review, enhancement, and QA. AI accelerates production. Humans ensure accuracy, add expertise, and catch patterns that fail quality checks.

v1 vs. v2 Comparison

| Dimension | Programmatic SEO v1 | Programmatic SEO v2 |
|---|---|---|
| Content depth | 300-500 words | 1,500-2,500+ words |
| Research | None | Multi-platform SERP analysis |
| Data sources | Single database | Multiple APIs + proprietary data |
| Human involvement | 0-5% | 30-40% |
| AI search optimization | None | Tables, FAQs, schema markup |
| Update frequency | Never | Monthly data, quarterly content |
| Information gain | Zero (duplicate structure) | Unique data per page |
| Typical ROI | Negative (penalties) | 400-900% over 12 months |

The Programmatic SEO v2 Framework

Phase 1: Opportunity Identification

Not every topic is suited for programmatic SEO. The best opportunities share four characteristics:

High keyword volume with consistent intent. You need hundreds or thousands of long-tail keywords that follow a repeatable pattern. "Best [software] for [industry]" works because there are 50+ industries with consistent comparison intent. "How to deal with [emotion]" does not work because each variation requires completely different expertise.

Structured data availability. You need a reliable data source: product catalogs with pricing and features, location databases with demographics, industry databases with categories and use cases. Without structured data, you are generating content from thin air.

Manageable competition. Check whether existing results for your keyword patterns are dominated by high-authority sites or thin content. If the SERPs are full of thin pages, that is a programmatic SEO v2 opportunity. If established authorities already cover each variation deeply, consider a different angle.

Business value. Each page should target keywords with commercial or informational intent that maps to your funnel. Use content briefs to validate that each template category has clear conversion potential.

Proven Use Cases:

| Pattern | Example | Typical Page Count | Data Source |
|---|---|---|---|
| Product + Industry | "CRM software for healthcare" | 50-200 pages | Product DB + industry DB |
| Service + Location | "IT support in [City]" | 50-500 pages | Service catalog + location DB |
| Product + Feature | "CRM with AI forecasting" | 20-50 pages | Feature matrix |
| Product + Price | "CRM under $50/user/month" | 10-20 pages | Pricing database |
| Template + Niche | "Wedding invitation templates" | 100-1,000 pages | Template library |
| Comparison | "[Product A] vs [Product B]" | 50-200 pages | Product pairs matrix |

Canva executes the "Template + Niche" pattern at massive scale, with 190,000+ indexed pages covering every variation from "Minimalist Wedding Invitations" to "Professional Marketing Pitch Decks." Each page includes actual templates you can use, making the content genuinely useful rather than keyword-stuffed filler.
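
The patterns in the table above can be expanded into a keyword list mechanically. The sketch below is one possible way to do it in Python; the function name `expand_pattern` and the slot values are hypothetical placeholders, and in practice the values would come from your own product and industry databases.

```python
from itertools import product


def expand_pattern(pattern: str, slots: dict) -> list:
    """Return one keyword for every combination of slot values."""
    names = list(slots)
    keywords = []
    for combo in product(*(slots[name] for name in names)):
        keywords.append(pattern.format(**dict(zip(names, combo))))
    return keywords


# Hypothetical slot values for illustration only.
slots = {
    "software": ["CRM software", "help desk software"],
    "industry": ["healthcare", "real estate", "construction"],
}

keywords = expand_pattern("{software} for {industry}", slots)
print(len(keywords))  # 6
print(keywords[0])    # CRM software for healthcare
```

Expanding the list is the easy part. Each generated keyword still needs the intent and competition checks described in this phase before it earns a page.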

Phase 2: Template Architecture

Your template is the backbone of every page. A weak template produces thousands of weak pages. A strong template produces thousands of strong pages. Invest heavily here.

Core Template Structure:

H1: [Product Type] for [Target Audience]

H2: What Is [Product Type]?

(3-4 paragraphs: definition, relevance to target audience, market context)

H2: Why [Target Audience] Needs [Product Type]

(Pain points, benefits, ROI justification with data)

H2: Top [Number] [Product Type] for [Target Audience]

(Comparison table: name, price, key features, rating)

(This table is critical for Perplexity extraction)

H2: Detailed Reviews

H3: [Product 1] (500-700 words with pros/cons/pricing/verdict)

H3: [Product 2]

H3: [Product 3]

H2: Key Features to Evaluate

(5-7 features with explanations specific to target audience)

H2: Pricing Guide

(Price ranges, budget tiers, hidden costs, ROI calculation)

H2: How to Choose [Product Type] for [Target Audience]

(Decision framework, evaluation criteria, common mistakes)

H2: FAQ

(15-25 questions, structured for FAQPage schema)

H2: Methodology

(How you researched, what data sources, evaluation criteria)

Template Quality Standards:

- At least 1,500 words per page (typical range: 1,500-2,500)

- At least one comparison table (Perplexity cites tabular content 2.5x more than text-only)

- FAQ section with 15-25 questions for FAQPage schema

- Methodology section that establishes credibility

- Platform-specific elements: structured data, internal links, clear heading hierarchy

The Uniqueness Requirement: Every section in your template must have slots for variable, data-driven content that changes meaningfully between pages. A "Why [Industry] Needs CRM" section should reference industry-specific pain points, regulations, and workflows, not generic benefits with the industry name swapped in.
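
One rough way to check this requirement is to mask the variable in two rendered pages and measure how much text they still share. The sketch below uses Jaccard similarity over three-word shingles; the function names and the sample sentences are hypothetical, and this is a heuristic for internal QA, not a reproduction of how any search engine evaluates pages.

```python
import re


def shingles(text: str, size: int = 3) -> set:
    """Split text into overlapping word n-grams."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def masked_similarity(page_a: str, var_a: str, page_b: str, var_b: str) -> float:
    """Jaccard similarity of two pages after their variables are masked out."""
    a = shingles(re.sub(re.escape(var_a), "VAR", page_a, flags=re.IGNORECASE))
    b = shingles(re.sub(re.escape(var_b), "VAR", page_b, flags=re.IGNORECASE))
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


# Hypothetical section copy for illustration.
swapped_a = "Healthcare teams need a CRM to manage contacts and close deals faster."
swapped_b = "Construction teams need a CRM to manage contacts and close deals faster."
specific_b = "Construction firms track bids, subcontractors, and change orders across long project cycles."

print(masked_similarity(swapped_a, "Healthcare", swapped_b, "Construction"))   # 1.0
print(masked_similarity(swapped_a, "Healthcare", specific_b, "Construction"))  # 0.0
```

A score near 1.0 means the section is identical once the variable is removed. Where to set the alert threshold is a judgment call that depends on how much fixed copy your template legitimately shares.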

Phase 3: Research Integration

This is where programmatic SEO v2 diverges most sharply from v1. Research is not optional. It is the phase that determines whether your pages provide information gain or just noise.

Per-Category Research:

- Multi-platform SERP analysis (Google, Perplexity, ChatGPT, Claude) for each keyword cluster

- Top products or services identified with current pricing and features

- Common user questions and concerns extracted from forums, reviews, and "People Also Ask"

- Content gaps: what existing results miss that your pages can cover

- Platform-specific optimization signals (what format earns AI citations)

Research Automation at Scale:

- Use APIs (RankDraft, Ahrefs, SEMrush) for keyword and SERP data at scale

- Scrape product databases for pricing, features, and ratings

- Extract FAQ data from "People Also Ask" and forum discussions

- Generate per-category research summaries that feed into content generation

The research phase is what lets you pass Google's information gain test. Wise (formerly TransferWise) built an entire custom CMS called "Lienzo" specifically to integrate real-time exchange rate data, bank fee structures, and transfer speed comparisons into their 8.5 million currency pages. Each page is backed by data that makes it genuinely more useful than any competitor.

For a deeper dive on structuring research workflows, see our content operations framework guide.

Phase 4: Data Pipeline

Your data pipeline determines the accuracy and freshness of every page. Bad data at scale means thousands of pages with wrong information.

Data Sources:

- Product databases (pricing, features, ratings, screenshots)

- Location databases (demographics, economic data, local providers)

- Industry databases (categories, regulations, compliance requirements, market size)

- User feedback databases (reviews, ratings, common complaints)

- Real-time APIs (exchange rates, stock prices, weather data)

Pipeline Architecture:

Source Systems → Extract → Transform → Validate → Load → Generate

Validation checks:

- Flag missing data

- Verify pricing accuracy

- Check for staleness

- Ensure consistency

Data Validation Rules:

- Pricing data must be verified against source within the last 30 days

- Feature lists must match the vendor's current product page

- Ratings must come from verifiable third-party sources

- Any data point older than 90 days gets flagged for manual review

- Missing data fields trigger page exclusion (do not publish incomplete pages)
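
The rules above translate fairly directly into a validation function. This is a minimal sketch under stated assumptions: the `ProductRecord` fields and the three status labels are hypothetical, and treating stale pricing as a manual-review case (rather than an exclusion) is an assumption, since the rules do not say which it should be.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

PRICING_MAX_AGE = timedelta(days=30)  # pricing must be verified within 30 days
REVIEW_MAX_AGE = timedelta(days=90)   # older data is flagged for manual review


@dataclass
class ProductRecord:
    # Field names are hypothetical; map them to your own schema.
    name: Optional[str]
    price: Optional[float]
    features: Optional[list]
    price_verified_on: date
    last_updated_on: date


def validate(record: ProductRecord, today: date) -> tuple:
    """Return (status, issues); status is 'ok', 'review', or 'exclude'."""
    issues = []
    for field_name in ("name", "price", "features"):
        if getattr(record, field_name) in (None, "", []):
            issues.append(f"missing {field_name}")
    if issues:
        return "exclude", issues  # incomplete pages are never published
    if today - record.price_verified_on > PRICING_MAX_AGE:
        issues.append("pricing not verified in the last 30 days")
    if today - record.last_updated_on > REVIEW_MAX_AGE:
        issues.append("data older than 90 days")
    return ("review" if issues else "ok"), issues


today = date(2026, 1, 15)
fresh = ProductRecord("Example CRM", 49.0, ["pipeline view"], date(2026, 1, 2), date(2026, 1, 2))
stale = ProductRecord("Example CRM", 49.0, ["pipeline view"], date(2025, 11, 1), date(2025, 9, 1))
incomplete = ProductRecord("Example CRM", None, [], date(2026, 1, 2), date(2026, 1, 2))

for record in (fresh, stale, incomplete):
    print(validate(record, today))
```

Passing `today` in as an argument, instead of calling `date.today()` inside the function, keeps the check reproducible in tests and backfills.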

Zillow demonstrates what a mature data pipeline looks like at extreme scale. Their programmatic pages pull from property value estimates, price trend data, school ratings, walkability scores, and local market statistics. One sitemap index for off-market homes alone contains roughly 100 million URLs, each backed by structured data that makes the page genuinely informative.

Phase 5: Content Generation

With research and data in place, generation follows a four-step process.

Step 1: Template Application. Apply the template to each keyword variation. Insert variable data from your pipeline. Generate the base content structure.

Step 2: AI Enhancement. Use AI (Claude, GPT-4) to expand template sections with keyword-specific insights. This is where each page gains depth: industry-specific use cases, feature analysis, comparison context. The AI draws on your research data, not generic training knowledge. For guidance on effective human-AI collaboration workflows, see our dedicated guide.

Step 3: Human Review. Review every page (or a statistically significant sample at very high volumes) for:

- Factual accuracy of data points, pricing, and features

- Platform optimization (tables render correctly, schema validates)

- AI writing patterns (remove hollow intensifiers, generic phrasing, puffery)

- Genuine information gain (does this page add value beyond variable swapping?)

- Brand voice consistency
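
For the "statistically significant sample" option, one common way to size the sample is Cochran's formula with a finite population correction. The sketch below assumes a 95% confidence level (z = 1.96), a 5% margin of error, and the most conservative defect rate of 50%; those defaults and the function name are assumptions, not figures from this guide.

```python
import math


def review_sample_size(batch_size: int, margin: float = 0.05,
                       z: float = 1.96, p: float = 0.5) -> int:
    """Pages to review so the observed defect rate is within `margin`."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    n0 = (z ** 2) * p * (1 - p) / margin ** 2   # infinite-population size
    n = n0 / (1 + (n0 - 1) / batch_size)        # finite population correction
    return min(batch_size, math.ceil(n))


print(review_sample_size(100))     # 80
print(review_sample_size(10_000))  # 370
```

The numbers show why small batches are effectively reviewed in full: a 100-page pilot needs 80 pages sampled, while a 10,000-page batch needs only 370 for the same precision.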

Step 4: Technical QA

- Schema markup validation (FAQPage, Product, Organization)

- Internal linking verification

- Core Web Vitals check (programmatic pages must load fast at scale)

- Mobile rendering validation

- URL structure and canonical tag verification

Automation Split:

- 60-70% automated (template application, data insertion, AI expansion)

- 30-40% human (research validation, review, enhancement, QA)

This split is not a target to optimize away. The human component is what separates programmatic SEO v2 from the template spam that gets penalized. Sites implementing quality checklists at this stage see measurably better indexing rates and citation frequency.

Phase 6: Schema Markup for AI Visibility

Schema markup is how AI search engines understand what makes each programmatic page unique. When you have 10,000 city pages, the schema's about, areaServed, and offers properties tell AI systems that this specific page is about IT support in Austin, not a generic template.

High-Impact Schema Types:

| Schema Type | Use Case | AI Impact |
|---|---|---|
| FAQPage | FAQ sections | Directly maps to AI Q&A extraction |
| Product | Product review pages | Enables price/feature extraction |
| HowTo | Step-by-step guides | AI can decompose and cite steps |
| Article/BlogPosting | Content pages | Establishes authorship and freshness |
| Organization (with knowsAbout) | Site-wide | Declares topical expertise areas |
| LocalBusiness | Location pages | Enables local AI answer matching |

The data supports this investment. Sites with proper schema markup have a 2.5x higher chance of appearing in AI-generated answers. 89% of Google-cited pages have schema markup. Content with structured data and FAQ blocks saw a 44% increase in AI search citations (BrightEdge, 2025).
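
As an illustration of generating schema per page, the sketch below builds FAQPage JSON-LD from a list of question and answer pairs. The `FAQPage`, `Question`, `acceptedAnswer`, and `Answer` names follow the schema.org vocabulary; the function name and the sample question are hypothetical.

```python
import json


def faq_page_jsonld(faqs: list) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical FAQ content for illustration.
markup = faq_page_jsonld([
    ("What does IT support in Austin typically include?",
     "Placeholder answer drawn from your own research data."),
])
print(markup)
```

The resulting string goes inside a `<script type="application/ld+json">` element in the page template, and should be run through a schema validator as part of technical QA.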

For implementation details, see our complete schema markup guide for AI Overviews.

Phase 7: Launch and Scale

Staged Rollout:

| Stage | Pages | Duration | Goal |
|---|---|---|---|
| Pilot | 50-100 pages | 2 weeks | Validate template and data pipeline |
| Test batch | 100-500 pages | 30 days | Measure indexing, traffic, citations |
| Scale | 500-2,000 pages | 60 days | Optimize based on test batch data |
| Full volume | 2,000-10,000+ pages | Ongoing | Continuous production and updates |

Key Metrics to Track:

- Indexing rate: Target 80-90%. If below 70%, your pages lack sufficient uniqueness.

- Traffic per page: 50-500 visits/month per page is typical for well-executed programmatic SEO v2.

- AI citation frequency: Track citations across Google AI Overviews, Perplexity, and ChatGPT Search. Use our AI citation tracking guide for methodology.

- Conversion rate: 0.5-3% per page, depending on intent match and CTA placement.

- Content decay signals: Monitor for traffic drops that indicate content staleness.
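
The indexing thresholds above are simple enough to encode as a gate between rollout stages. This is a minimal sketch: the status labels and the function name are hypothetical, and how to treat the 70-80% band is an assumption, since only the 80-90% target and the below-70% warning are stated.

```python
def indexing_status(indexed: int, published: int) -> str:
    """Classify a batch by the share of published pages that are indexed."""
    if published <= 0:
        raise ValueError("published must be positive")
    rate = indexed / published
    if rate >= 0.80:
        return "on_target"               # within or above the 80-90% target
    if rate >= 0.70:
        return "watch"                   # assumed middle band: hold and monitor
    return "investigate_uniqueness"      # below 70%: pages likely too similar


print(indexing_status(88, 100))  # on_target
print(indexing_status(74, 100))  # watch
print(indexing_status(52, 100))  # investigate_uniqueness
```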

Ongoing Optimization:

- Update pricing and feature data monthly

- Refresh content quarterly with new research

- Prune underperforming pages that drag down site quality (see content pruning strategies)

- A/B test template variations on new batches

- Monitor Google Search Console for indexing issues and coverage warnings

Real-World Examples: Programmatic SEO v2 in Action

Zapier: Integration Landing Pages

Zapier built 70,000+ programmatic pages targeting specific app integration queries like "Send Slack messages from Gmail" and "Add Trello cards from Google Sheets." Each page is backed by real integration data: which apps connect, what triggers and actions are available, setup steps, and user reviews. The result: 16 million+ organic visitors per month.

Why it works: Each page delivers unique, verifiable data that serves the searcher's specific intent. You cannot get "how to connect Slack to Gmail via Zapier" from a generic automation page.

Wise: Currency and Banking Pages

Wise generates 60 million+ monthly organic visitors from 8.5 million currency converter pages. They built a custom CMS ("Lienzo") specifically for programmatic SEO, pulling live exchange rate data, bank fee structures, transfer speed benchmarks, and SWIFT code information into each page.

Why it works: Real-time data that changes daily. Every page has information you cannot find anywhere else aggregated in that way. Wise produces approximately 300 articles per quarter across multiple languages, each backed by proprietary data.

Nomadlist: City Cost-of-Living Pages

Nomadlist generates 50,000 monthly organic visitors from 24,000+ indexed pages covering city-by-city cost of living, demographics, weather, internet speed, safety ratings, and quality of life scores for digital nomads.

Why it works: Proprietary data from user surveys and local research. Each city page has genuinely different, verified information that templates alone could never produce.

What These Examples Share

Every successful programmatic SEO v2 implementation has three things in common:

- Proprietary or aggregated data that makes each page uniquely valuable

- Genuine utility beyond keyword targeting (users would bookmark these pages)

- Continuous freshness through automated data pipelines and regular updates

Common Mistakes and How to Avoid Them

1. Thin Content with Variable Swapping

The mistake: Generating 300-500 word pages where only the city or product name changes. Google's Firefly system detects this pattern reliably.

The fix: At least 1,500 words per page (typically 1,500-2,500), with multiple sections that contain genuinely variable content. Each page should pass the "remove the variable" test.

2. Skipping Multi-Platform Research

The mistake: Generating pages without analyzing what Google, Perplexity, and ChatGPT already surface for each keyword category.

The fix: Research every template category across platforms. Identify what existing content covers, what it misses, and where your pages can provide information gain. See our guide on research-first content methodology.

3. Publishing Without Human Review

The mistake: Letting the pipeline run from data to published page without human oversight.

The fix: Human review is non-negotiable. At minimum, review a statistically significant sample of each batch. At best, review every page. The 30-40% human effort is what prevents penalties and ensures genuine quality.

4. Stale Data

The mistake: Generating pages once and never updating them. Pricing changes, products launch and shut down, features evolve.

The fix: Automated data refresh pipelines. Pricing updates monthly. Content refresh quarterly. Perplexity specifically favors fresh content: data less than 3 months old accounts for 54% of its citations.

5. Ignoring AI Search Optimization

The mistake: Optimizing only for Google while ignoring that Perplexity now has 45 million monthly active users and AI Overviews appear in 25.8% of searches.

The fix: Include comparison tables (cited 2.5x more by Perplexity), FAQ sections with schema markup, and structured data on every page. For a complete breakdown of AI search engine differences, see our comparison guide.

6. Rigid Templates

The mistake: One template structure applied identically to every variation, even when some categories need different treatment.

The fix: Build flexible templates with conditional sections. A "CRM for healthcare" page needs a compliance/HIPAA section that "CRM for real estate" does not. Template logic should adapt to category requirements.
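
Conditional sections can be implemented with very little machinery. The sketch below uses Python's standard `string.Template` and includes a compliance section only when the category record carries compliance data; the section headings, field names, and placeholder copy are all hypothetical.

```python
from string import Template

# Sections every page gets: (heading template, body template).
BASE_SECTIONS = [
    ("Why $industry Needs $product", "$pain_points"),
    ("Pricing Guide", "$pricing_summary"),
]
# Section added only for categories that carry compliance data.
COMPLIANCE_SECTION = ("Compliance Requirements for $industry", "$compliance_notes")


def render_page(category: dict) -> str:
    """Render headings and bodies, adding conditional sections as needed."""
    sections = list(BASE_SECTIONS)
    if category.get("compliance_notes"):
        sections.insert(1, COMPLIANCE_SECTION)
    parts = []
    for heading, body in sections:
        # substitute() raises KeyError on a missing field, so incomplete
        # records fail loudly instead of publishing blank sections.
        parts.append(Template(heading).substitute(category))
        parts.append(Template(body).substitute(category))
    return "\n\n".join(parts)


# Placeholder records for illustration.
healthcare = {
    "industry": "healthcare", "product": "CRM",
    "pain_points": "Placeholder: industry-specific pain points.",
    "pricing_summary": "Placeholder: pricing tiers.",
    "compliance_notes": "Placeholder: HIPAA considerations.",
}
real_estate = {
    "industry": "real estate", "product": "CRM",
    "pain_points": "Placeholder: industry-specific pain points.",
    "pricing_summary": "Placeholder: pricing tiers.",
}

print(render_page(healthcare))
print(render_page(real_estate))
```

The healthcare page gains a compliance section and the real estate page does not, without maintaining two separate templates.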

Programmatic SEO v2 ROI

Production Economics

| Metric | Manual Content | Programmatic SEO v2 |
|---|---|---|
| Output | 10-50 pieces/month | 500-5,000 pages/month |
| Cost per piece | $500-2,000 | $50-200 |
| Time per piece | 20-40 hours | 1-3 hours (automated + human) |
| Research depth | Deep (per piece) | Deep (per category, applied to many) |
| Update cost | Same as creation | 10-20% of creation cost |

Efficiency gain: 10-100x more content at 80-90% lower cost per piece and 10-20x faster production.

Performance Benchmarks

Based on aggregated data from successful programmatic SEO v2 implementations:

- Indexing rate: 80-90% for quality pages (vs. 30-50% for v1 thin content)

- Traffic per page: 50-500 visits/month (varies by niche competition)

- AI citations: 10-30 citations per month per 100 pages

- Conversion rate: 0.5-3% (30-50% higher than generic blog posts due to specific intent matching)

- Average ROI: 748% ($7.48 in net return for every $1 invested)

- B2B SaaS breakeven: Approximately 7 months

ROI Calculation Example

| Item | Value |
|---|---|
| Investment: 1,000 pages | $50,000-200,000 |
| Monthly traffic (at 200 visits/page avg) | 200,000 visits |
| Conversion rate | 1.5% |
| Monthly leads | 3,000 |
| Cost per lead (SEO) | ~$31 |
| Comparable PPC cost per lead | ~$181 |
| Annual PPC equivalent value | $6.5M+ |
| 12-month ROI | 400-900% |
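
The derived rows in this table follow from three inputs, and the arithmetic can be reproduced in a few lines. The sketch below covers only the rows that can be computed from the table itself (monthly traffic, monthly leads, and annual PPC equivalent value); the ~$31 cost per lead and the 400-900% ROI range are taken as given and are not derived here. Variable names are illustrative.

```python
pages = 1_000
visits_per_page = 200      # average monthly visits per page
conversion_rate = 0.015    # 1.5%
ppc_cost_per_lead = 181    # comparable PPC cost per lead, in dollars

monthly_visits = pages * visits_per_page
monthly_leads = round(monthly_visits * conversion_rate)
annual_ppc_equivalent = monthly_leads * 12 * ppc_cost_per_lead

print(f"{monthly_visits:,} visits/month")          # 200,000 visits/month
print(f"{monthly_leads:,} leads/month")            # 3,000 leads/month
print(f"${annual_ppc_equivalent:,} PPC-equivalent per year")  # $6,516,000
```

Swapping in your own traffic and conversion assumptions shows how sensitive the headline figure is: halving visits per page halves the PPC-equivalent value.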

For detailed ROI measurement methodology, see our ROI measurement guide for AI-optimized content.

Case Study: B2B SaaS Industry Guide Scaling

Challenge: A B2B SaaS company needed to capture long-tail traffic across 150+ industries, but manual content creation had produced only 50 guides over 6 months at $1,500 per guide.

Programmatic SEO v2 Implementation:

1. Opportunity Validation: Identified 150+ long-tail keywords following the "[Software Category] for [Industry]" pattern. Validated consistent comparison/informational intent. Competition analysis showed most existing results were thin, outdated listicles.

2. Template Development: Created a 2,000+ word template with comparison tables optimized for Perplexity extraction, an FAQ section of 20+ questions with FAQPage schema, industry-specific compliance sections (conditional), and a methodology disclosure section.

3. Research Integration: Multi-platform SERP research for each industry vertical. Identified top 5-8 products per industry with current pricing, features, and user ratings. Extracted industry-specific pain points, regulations, and workflows from forums and trade publications.

4. Data Pipeline: Integrated product database (pricing updated via API monthly), industry database (regulations, market size, growth rates), and user review aggregation (G2, Capterra ratings). Automated validation flagged 12% of pages for manual data correction before launch.

5. Generation and Review: AI expanded template sections with industry-specific insights. Human reviewers checked every page for accuracy, added expert commentary, and verified data points. Technical QA validated schema markup and internal linking.

Results After 6 Months:

| Metric | Before | After | Change |
|---|---|---|---|
| Published pages | 50 | 150 | 3x increase |
| Monthly organic traffic | 5,000 | 28,000 | 5.6x increase |
| AI citations (monthly) | 0 | 52 | From zero |
| Cost per page | $1,500 | $180 | 88% reduction |
| Time to full production | 6 months | 5 weeks | 5x faster |
| Average conversion rate | 1.5% | 2.7% | 80% improvement |
| Monthly leads | 75 | 756 | 10x increase |

Key insight: The pages that performed best were not the ones with the most content. They were the ones with the most unique, industry-specific data points: compliance requirements, typical contract values, integration needs, and buyer personas that generic "best CRM" pages never cover.

The Future of Programmatic SEO (2026 and Beyond)

The landscape is shifting fast. Here are the trends shaping programmatic SEO v2 over the next 12-18 months.

RAG-Powered Content Generation

The shift from "variable swapping" to "knowledge integration" is accelerating. Retrieval-Augmented Generation (RAG) pipelines index internal documents, research data, and proprietary databases, retrieve the passages relevant to each page, and instruct the model to write from that retrieved material rather than from generic training data. Each page can demonstrate genuine E-E-A-T because the content is grounded in real, verifiable information.
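
The retrieval half of such a pipeline can be sketched without any external services. The toy example below ranks documents by simple word overlap with the page topic and builds a grounded prompt from the top matches. Production systems typically use embedding-based search instead, and the function names, sample facts, and prompt wording here are all hypothetical.

```python
import re


def tokenize(text: str) -> set:
    """Lowercase word set used for overlap scoring."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list, k: int = 2) -> list:
    """Return up to k documents sharing the most words with the query."""
    query_terms = tokenize(query)
    ranked = sorted(documents, key=lambda d: len(query_terms & tokenize(d)), reverse=True)
    return [doc for doc in ranked[:k] if query_terms & tokenize(doc)]


def build_prompt(topic: str, documents: list, k: int = 2) -> str:
    """Assemble a prompt that restricts the model to retrieved facts."""
    facts = "\n".join(f"- {fact}" for fact in retrieve(topic, documents, k))
    return f"Write the section on '{topic}' using ONLY these facts:\n{facts}"


# Placeholder knowledge base for illustration.
documents = [
    "Healthcare CRM buyers check HIPAA compliance before signing.",
    "Real estate CRM users prioritize listing sync and lead routing.",
    "Exchange rate feeds refresh on a fixed schedule.",
]

print(build_prompt("CRM for healthcare", documents))
```

The prompt that reaches the model contains only the two CRM-related facts, which is the property that keeps generated copy tied to your own data.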

AI Research Agents

AI agents that conduct multi-platform research autonomously are becoming production-ready. Instead of manually researching each keyword category, agents can analyze SERPs, extract competitor data, identify content gaps, and generate research briefs at scale. This compresses the research phase from weeks to hours.

Predictive Page Performance

Machine learning models trained on your existing programmatic pages can predict which new pages will perform before you publish them. This lets you prioritize high-potential pages and skip categories where the data or competition suggests poor returns.

Real-Time Data Integration

Live data feeds from APIs (pricing, inventory, exchange rates, market data) enable pages that update continuously rather than on monthly cycles. This is already standard for financial content (Wise) and is expanding to product comparisons, job listings, and local services.

Multi-Format Content at Scale

Programmatic SEO v2 is expanding beyond text. Auto-generated comparison charts, data visualizations, and short-form video summaries increase engagement and citation probability. Multi-modal content integration correlates with a 156% higher AI Overview selection rate.

Getting Started: Your First 30 Days

Week 1: Validate the Opportunity

- Identify 3-5 keyword patterns with 100+ variations each

- Analyze SERP competition for a sample of 20 keywords per pattern

- Confirm structured data sources exist for each pattern

- Calculate potential traffic and conversion value

Week 2: Build the Template

- Design template architecture with 8-12 sections

- Define variable data points per section

- Create conditional logic for category-specific sections

- Write the "fixed" copy that appears on every page

Week 3: Set Up the Pipeline

- Connect data sources and validate data quality

- Build the research workflow (manual or automated)

- Configure schema markup templates

- Set up QA checklists

Week 4: Pilot Launch

- Generate 50-100 pilot pages

- Human review every page

- Publish and submit to Google Search Console

- Set up monitoring for indexing, traffic, and citations

For teams scaling content operations beyond programmatic SEO, our content velocity strategies guide covers how to increase output across all content types without sacrificing quality. If you are building the team to support this, see our guide on content team structure for 2026.

Conclusion

Programmatic SEO v2 works because it combines the scale of automation with the quality of research-first methodology. The companies winning with this approach (Zapier, Canva, Wise, Zillow, Nomadlist) all share the same formula: proprietary data, genuine utility per page, structured content for AI extraction, and continuous freshness.

Build quality templates with real depth. Invest in research before you generate a single page. Set up data pipelines that keep information accurate. Make human review non-negotiable. Launch with a small pilot, measure rigorously, and scale based on results.

The opportunity is significant. With AI search engines processing billions of queries per month and organic CTR dropping 61% on queries where AI Overviews appear, the sites that structure content for both traditional and AI search will capture disproportionate value.

Frequently Asked Questions

Q: Is programmatic SEO v2 penalized by Google? A: No. Google penalizes low-quality, thin content, not the programmatic approach itself. Their "Scaled Content Abuse" policy targets pages created primarily to manipulate rankings. Programmatic SEO v2 pages with genuine depth (1,500-2,500+ words), unique data per page, and human review consistently perform well. Zapier, Canva, and Wise are proof that programmatic content at massive scale can thrive.

Q: How much human oversight does programmatic SEO v2 require? A: Plan for 30-40% human effort across the workflow. Research design, template architecture, data validation, content review, and QA all require human judgment. The 60-70% that is automated covers template application, data insertion, AI content expansion, and schema markup generation. Do not try to reduce the human percentage below 30%.

Q: How many pages should I start with? A: Start with a pilot batch of 50-100 pages. Monitor indexing rate, traffic per page, and citation frequency for 30 days. If indexing exceeds 80% and traffic meets expectations, scale to 500 pages. Then iterate to full volume (2,000-10,000+ pages) based on performance data.

Q: How do I keep programmatic content fresh? A: Automate data refresh pipelines for pricing and feature data (monthly minimum). Refresh content quarterly with new research and updated analysis. Perplexity specifically favors recency: content less than 3 months old accounts for 54% of its citations. Set up content decay detection to catch pages losing traffic before they become stale.

Q: What topics work best for programmatic SEO v2? A: Topics with structured data and consistent search intent across many variations: product comparisons by industry, location-based service pages, feature-specific product pages, price range comparisons, and template/tool libraries. It does not work well for unique, story-driven content, thought leadership, or topics where each variation requires fundamentally different expertise.

Q: What is the expected ROI of programmatic SEO v2? A: Average ROI is 748% based on aggregated data from successful implementations. B2B SaaS companies typically break even at 7 months. The economics work because research costs are amortized across hundreds of pages per category, and SEO leads cost approximately $31 each compared to $181 for PPC.

Q: How do AI search engines handle programmatic pages? A: AI search engines (Perplexity, ChatGPT Search, Google AI Overviews) cite programmatic pages when they provide structured, extractable information. Comparison tables are cited 2.5x more than text-only content. Pages with schema markup have a 2.5x higher chance of appearing in AI answers. The key is structuring content for extraction, not just readability. See our Perplexity optimization guide and AI search engine comparison for platform-specific strategies.

Q: Should I use ChatGPT or Claude for content generation? A: Use both at different stages. Claude excels at research synthesis and nuanced analysis. GPT-4 is strong at structured content expansion and maintaining template consistency. The choice matters less than the quality of your research data and human review process. Neither tool compensates for thin research or missing data.

Q: How does programmatic SEO v2 relate to topical authority? A: Programmatic SEO v2 is one of the most effective ways to build topical authority at scale. Publishing 200 comprehensive pages about "CRM software for [industry]" signals deep expertise in CRM software to both Google and AI search engines. The key is ensuring each page genuinely demonstrates expertise rather than just keyword coverage.

Q: What schema markup should programmatic pages use? A: At minimum: FAQPage schema for FAQ sections, Product schema for product review pages, and Article/BlogPosting for content metadata. Add Organization schema with knowsAbout at the site level to declare topical expertise. For location pages, include LocalBusiness or areaServed properties. Sites with complete schema see up to 40% more AI Overview appearances. See our schema markup guide for implementation details.