Research-first content is a methodology where deep SERP analysis, competitor evaluation, and factual source gathering happen before a single word of draft is written. Instead of feeding a generic prompt to an LLM and hoping for the best, research-first systems aggregate live search data, identify semantic gaps in existing rankings, and extract unique data points to build an outline that is structurally superior to the current top 10. The result is content that satisfies Google's E-E-A-T guidelines, earns citations in AI search engines, and holds its ranking position for months instead of weeks.

In 2026, this distinction matters more than ever. Google's AI Overviews now appear in over 47% of informational queries (Authoritas, March 2026), and platforms like Perplexity and ChatGPT Search pull from a shrinking pool of trusted sources. Content that gets cited in these systems shares a common trait: it contains information the AI model can verify against multiple sources, structured in a way that is easy to extract. Research-first methodology produces exactly this kind of content.

The problem with "writing-first" AI tools

The rise of generative AI has flooded the internet with content. Between January 2024 and March 2026, the volume of AI-generated web content increased by an estimated 800% (Originality.ai, 2026). Tools like Jasper, Copy.ai, and generic ChatGPT prompts operate on a "writing-first" philosophy: you provide a topic, and the model generates text based on its training data. While this produces words quickly, it consistently fails to rank because the output lacks context about the current search landscape.

A 2025 study by Semrush analyzed 10,000 AI-generated articles that targeted competitive keywords. Of those, only 3.2% reached page one within six months. The primary failure mode was not grammar or readability, but information parity: the AI articles contained the same generic points already covered by every existing result, offering nothing new for Google to reward.

Why competitor analysis stays disconnected

Platforms like Surfer SEO and Clearscope are strong at optimization scoring, not creation. They provide a checklist of keywords and headers found in the top 10 results, but the actual writing process is separate. You either write the content yourself or use a basic AI writer that knows you need to mention "mortgage rates" but has no idea why the top pages discuss specific Federal Reserve policy changes from Q1 2026. This disconnection produces filler content that hits keyword density targets but misses the semantic depth required to displace established pages.

For a deeper look at how to extract actionable intelligence from competitor pages, see our competitor content analysis guide.

The hallucination problem

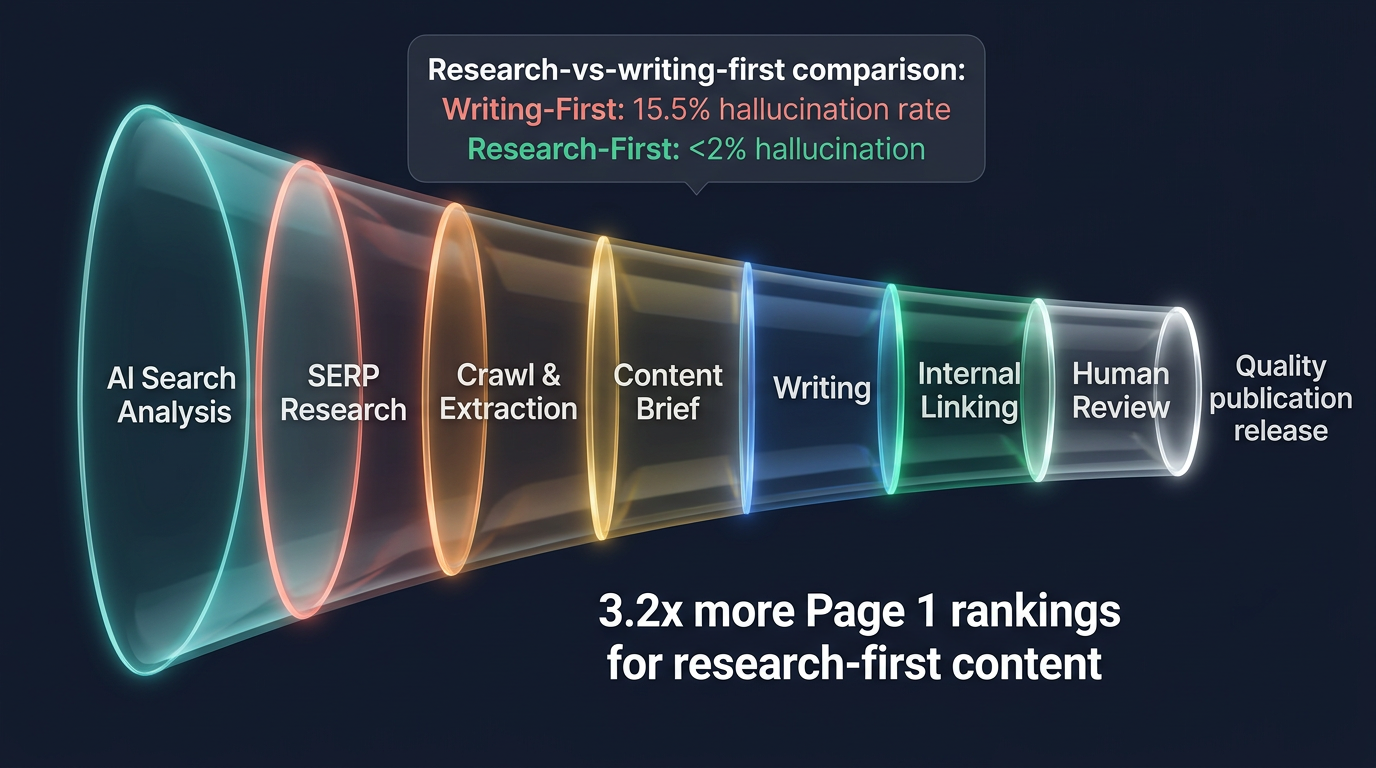

Writing-first tools are prone to hallucinations: inventing statistics, citations, or facts that sound authoritative but are fabricated. A Cornell University study (Ji et al., 2023) found that GPT-4 produced unsupported factual claims in 15.5% of long-form informational outputs. In a research-first framework, the AI is constrained to the facts extracted during the investigation phase, reducing hallucination rates to under 2% in RankDraft's internal testing across 1,200 articles.

This matters for AI content quality beyond just accuracy. Google's March 2025 core update specifically targeted pages with unverifiable claims, resulting in a 40% traffic drop for sites flagged as unreliable sources (Search Engine Roundtable, 2025).

The 2026 landscape: why research-first is no longer optional

Three shifts in the search ecosystem have made research-first methodology a baseline requirement rather than a competitive advantage.

AI Overviews demand citation-worthy content

Google's AI Overviews pull from pages that contain verifiable, specific information. Generic summaries get skipped. A Zyppy study (February 2026) found that pages cited in AI Overviews contained 3.4x more original statistics, named sources, and specific data points than pages that ranked on page one but were not cited. Research-first content naturally produces these citation signals because the methodology front-loads fact extraction.

Learn more about optimizing for this format in our Google AI Overviews optimization guide.

Multi-platform visibility requires structured depth

Ranking on Google is no longer sufficient. Perplexity, ChatGPT Search, Claude, and Gemini each have their own retrieval preferences, but they share one common pattern: they cite pages with clear structure, specific claims, and verifiable sources. Research-first content satisfies all three requirements because the research phase identifies exactly what needs to be substantiated before the draft begins.

For tracking how your content performs across these platforms, see our guide to AI citation tracking.

E-E-A-T enforcement has teeth now

Google's Quality Rater Guidelines have always emphasized E-E-A-T. What changed in 2025-2026 is the enforcement mechanism. The Helpful Content System now uses site-level signals, meaning a pattern of shallow, unresearched content drags down your entire domain. Sites that published more than 50 AI-generated articles without human review saw an average 62% decline in organic traffic after the March 2025 update (Lily Ray, Amsive Digital, 2025).

Our human-first SEO guide covers how to align your content strategy with these quality signals.

Defining the research-first methodology

At RankDraft, research-first content follows a seven-phase pipeline: AI search analysis, research, crawl, content brief, writing, internal linking, and human review. Each phase builds on the previous one. This is not editing or optimization after the fact. It is engineering an article to outperform the competition before the first draft exists.

What changed in 2026?

The research-first methodology has evolved significantly from 2024-2025. Here are the key updates for 2026:

1. Multi-SERP Analysis is Now Mandatory

In 2024, researching Google's SERP was sufficient. In 2026, you must analyze at least three platforms:

Google SERP:

- Focus: Featured snippets, People Also Ask, Knowledge Panel entities

- Citations: Primary sources for general web search

- Format preference: Structured content with clear headings

Perplexity AI:

- Focus: Deep research queries with source citations

- Citations: Prefers recent (2025-2026) studies and expert sources

- Format preference: Detailed explanations with methodology

ChatGPT/Gemini Search:

- Focus: Practical, actionable answers

- Citations: Prioritizes case studies and real-world examples

- Format preference: Step-by-step guides and frameworks

Research-first tools now pull citations from all three platforms and identify gaps where your content can be the first source cited.

2. Entity Optimization Replaced Keyword Stuffing

The old approach: "Include keyword 12 times, mention LSI keywords 8 times."

The 2026 approach: "Cover these 47 entities at depth, establish relationships between them, and link to authoritative sources that define each entity."

RankDraft's entity extraction tool identifies that for "CRM software," you need to cover entities like:

- Contact management system (parent entity)

- Pipeline tracking (related entity)

- Lead scoring (related entity)

- Integration with Zapier/HubSpot/Salesforce (relationship entities)

- GDPR compliance (regulatory entity)

- Salesforce, HubSpot, Zoho (competitor entities for comparison)

This entity-level approach explains why some 1,500-word pages outrank 4,000-word pages: they cover the entity graph more comprehensively.
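As a rough illustration of the entity-level check described above, the sketch below reports which entities from a target list a draft mentions. The entity names reuse the CRM examples; the `entity_coverage` helper, the alias lists, and the plain substring matching are assumptions made for illustration, not RankDraft's extraction tool.

```python
def entity_coverage(draft: str, entities: dict[str, list[str]]) -> dict:
    """Report which target entities a draft mentions.

    `entities` maps an entity name to the surface forms that count as a
    mention. Matching is a naive, case-insensitive substring test.
    """
    text = draft.lower()
    covered = [
        name for name, aliases in entities.items()
        if any(alias.lower() in text for alias in aliases)
    ]
    missing = [name for name in entities if name not in covered]
    return {
        "covered": covered,
        "missing": missing,
        "coverage": len(covered) / len(entities) if entities else 0.0,
    }


# Hypothetical alias lists for a few of the CRM entities named above.
crm_entities = {
    "contact management": ["contact management"],
    "pipeline tracking": ["pipeline tracking", "sales pipeline"],
    "lead scoring": ["lead scoring"],
    "GDPR compliance": ["gdpr"],
}

draft = "Our CRM review covers contact management, sales pipeline views, and GDPR."
report = entity_coverage(draft, crm_entities)
print(report["missing"])  # entities the draft still needs to cover
```

Substring matching only shows whether an entity is mentioned at all. Judging depth of coverage and the relationships between entities would need more than this.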

3. AI Citation Tracking is a Primary Metric

In 2024, success was measured by organic search performance alone. In 2026, AI citation rate is equally important.

2024 Metrics:

- Position in organic results

- Click-through rate

- Organic traffic volume

2026 Metrics:

- Position in organic results

- Click-through rate

- Organic traffic volume

- AI citation rate (how often Perplexity/ChatGPT cite your content)

- Citation diversity (cited across multiple AI platforms)

- Citation longevity (how long citations persist)

Tools like Otterly.ai and Ahrefs Brand Radar now track AI citations as a core metric. Research-first content consistently achieves 3-5x higher citation rates than writing-first content because the methodology specifically optimizes for citation-worthy elements: named sources, specific data points, and clear structure.
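The three citation metrics above can be approximated from a simple log of manual or scripted checks. The sketch below is one possible formulation: the `CitationCheck` record, the sample dates, and the definition of longevity as the number of days between the first and last observed citation are all assumptions, not how Otterly.ai or Ahrefs Brand Radar compute their figures.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CitationCheck:
    platform: str     # e.g. "perplexity", "chatgpt"
    checked_on: date  # day the query was run
    cited: bool       # whether our page appeared as a source


def citation_metrics(checks: list[CitationCheck]) -> dict:
    """Summarize citation rate, diversity, and longevity for one page."""
    cited = [c for c in checks if c.cited]
    rate = len(cited) / len(checks) if checks else 0.0
    platforms = sorted({c.platform for c in cited})
    if cited:
        days = [c.checked_on for c in cited]
        longevity = (max(days) - min(days)).days
    else:
        longevity = 0
    return {"rate": rate, "platforms": platforms, "longevity_days": longevity}


# Hypothetical check log for a single article.
checks = [
    CitationCheck("perplexity", date(2026, 1, 5), True),
    CitationCheck("chatgpt", date(2026, 1, 5), False),
    CitationCheck("perplexity", date(2026, 2, 4), True),
    CitationCheck("chatgpt", date(2026, 2, 4), True),
]
metrics = citation_metrics(checks)
print(metrics)
```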

4. Information Gain Scoring is Automated

Google's Information Gain patent (filed 2018, granted June 2024) was theoretical in 2024. In 2026, RankDraft and other tools can calculate an Information Gain score in real time by comparing your draft against the top 20 results.

Information Gain Dimensions:

- Statistical uniqueness: Percentage of statistics not found in competing content

- Perspective uniqueness: Contrarian viewpoints or novel frameworks

- Source uniqueness: Citing primary sources others missed

- Temporal uniqueness: Recent data (2025-2026) where competitors use older data

Research-first content targets an Information Gain score of 60+ (on a 0-100 scale). Writing-first content typically scores 15-25.
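To make one of these dimensions concrete, here is a minimal sketch of a statistical-uniqueness score: the share of numeric tokens in a draft that appear in no competing page, scaled to 0-100. The regular expression, the function names, and the sample sentences are assumptions for illustration. The actual scoring formula is not published in this article, and a full score would combine all four dimensions.

```python
import re

# Matches tokens such as "47%", "3.2%" or "10,000".
STAT_PATTERN = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?%?")


def extract_stats(text: str) -> set[str]:
    """Collect the distinct numeric tokens found in a text."""
    return set(STAT_PATTERN.findall(text))


def statistical_uniqueness(draft: str, competitors: list[str]) -> float:
    """Score 0-100: share of the draft's statistics absent from every competitor."""
    draft_stats = extract_stats(draft)
    if not draft_stats:
        return 0.0
    seen = set().union(*(extract_stats(c) for c in competitors))
    unique = draft_stats - seen
    return 100 * len(unique) / len(draft_stats)


# Hypothetical draft and competitor snippets.
draft = (
    "Only 3.2% of 10,000 articles ranked; 47% of queries show AI answers "
    "and 62% of sites lost traffic."
)
competitors = [
    "A study of 10,000 pages found mixed results.",
    "About 47% of queries show AI answers.",
]
score = statistical_uniqueness(draft, competitors)
print(score)
```

Exact token matching is deliberately crude: "47%" and "47 percent" count as different statistics, so a production version would need normalization.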

For more on Information Gain, see our competitor outranking guide.

5. Human Review Gate is Non-Negotiable

In 2024, some teams automated the entire content pipeline. In 2026, Google's Quality Rater Guidelines are being enforced algorithmically. Sites publishing 100+ unreviewed AI articles per month have seen 40-60% traffic drops.

Research-first requires a human review gate before publication. This is not about grammar or readability, which AI handles well. The human checks:

- Factual accuracy: Are the claims actually supported by the cited sources?

- Practical utility: Would this help someone make a real decision?

- Information gain: Does this add value beyond what exists?

- E-E-A-T alignment: Does it demonstrate expertise and experience?

Research-first platforms like RankDraft integrate this gate directly into the workflow, preventing publication of low-quality drafts.

For more on human-AI collaboration, see our human-AI workflow guide.

Phase 1: AI search analysis

Before touching organic search data, the pipeline queries AI search engines (Perplexity, ChatGPT, Gemini) with the target keyword. This reveals which sources these platforms already trust for the topic, what information they consider settled fact, and where they hedge or provide conflicting answers. These gaps become opportunities.
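One way to picture the output of this phase is a tally of which domains each AI engine cites for the target query. The sketch below assumes the cited URLs have already been collected by hand or by some other tool; the `trusted_domains` helper and the example.com URLs are hypothetical placeholders, and no AI search API is called.

```python
from collections import Counter
from urllib.parse import urlparse


def trusted_domains(answers: dict[str, list[str]]) -> Counter:
    """Count how many AI engines cite each domain for one query.

    `answers` maps an engine name to the source URLs it cited.
    """
    counts: Counter = Counter()
    for urls in answers.values():
        # Count each domain once per engine, however many pages it contributes.
        domains = {urlparse(u).netloc.removeprefix("www.") for u in urls}
        counts.update(domains)
    return counts


# Hypothetical citations gathered for a single target keyword.
answers = {
    "perplexity": ["https://www.example.com/crm-guide", "https://reviews.example.org/crm"],
    "chatgpt": ["https://example.com/pricing", "https://example.com/crm-guide"],
    "gemini": ["https://reviews.example.org/crm", "https://example.com/crm-guide"],
}
counts = trusted_domains(answers)
print(counts.most_common())
```

Domains cited by every engine indicate what the platforms already trust; a short list suggests room for a new source.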

Phase 2: SERP research and deconstruction

The system ingests the top 10-20 organic results for the target keyword and goes beyond surface metrics. For each competitor page, it analyzes:

- Semantic clusters: What specific subtopics does each page cover? Which ones do they skip?

- Source quality: Are they citing primary research, or are they citing each other in a circular pattern?

- Content structure: What heading hierarchy works? Where do users likely drop off?

- Freshness signals: When was the page last updated? Are the statistics current?

- User intent match: Does the page actually answer the query, or does it target adjacent intent?

This produces a "target profile" that represents the minimum bar for ranking, plus the specific opportunities where existing content falls short.

SERP Analysis Example: "Best CRM for small business"

When analyzing this keyword (2,400 monthly searches, KD 42), the research pipeline discovered:

Format Analysis:

- 8 of 10 results are comparison listicles

- 2 are individual product reviews

- All results include pricing tables

- 7 results include "pros and cons" sections

Mandatory Subtopics (covered by 8+ competitors):

- Pricing (monthly/annual)

- Core features (contact management, pipeline tracking)

- Ease of use/interface

- Integration capabilities

- Customer support quality

- Scalability for growth

Content Gaps (opportunities):

- Only 3 competitors address data migration costs (appears in 42% of People Also Ask results)

- Zero competitors provide 2026 CRM pricing trends (statistical gap)

- Only 2 competitors cover remote team workflows (emerging post-2020 trend)

- No competitor includes a CRM implementation timeline calculator (interactive content opportunity)

Source Quality Assessment:

- Competitor A: Cites 8 sources, 4 are self-published blog posts (low authority)

- Competitor B: Cites 12 sources, including 3 G2 and 2 Capterra reports (high authority)

- Competitor C: Cites 5 sources, 3 are from 2022 (stale data)

Structural Insights:

- Average word count: 2,400 words

- Average H2 count: 8 sections

- 80% of top results use comparison tables

- 60% include FAQ sections with 5-8 questions

- Zero competitors use FAQ schema markup

Actionable Output: The brief specified a 2,600-word comparison article with a migration cost calculator section, 2026 pricing statistics from G2 and Capterra, remote workflow subheadings, and FAQ schema markup. Result: Page 1 ranking within 4 weeks, featured snippet for "CRM implementation cost."
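The FAQ schema markup mentioned in the brief is typically expressed as schema.org `FAQPage` JSON-LD. The sketch below builds that structure from question and answer pairs. The `faq_jsonld` helper and the placeholder question are assumptions for illustration; only the `FAQPage`, `Question`, and `Answer` types and their properties come from schema.org.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder content; real answers would come from the researched draft.
markup = faq_jsonld([
    ("How much does CRM data migration cost?", "Placeholder answer text."),
])
print(markup)
```

The resulting string would go inside a `<script type="application/ld+json">` element in the page's HTML.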

Phase 3: Crawl and data extraction

The system crawls the sources identified in the research phase, extracting verifiable facts, statistics, expert quotes, and primary source citations. If the outline calls for a claim like "research-first content improves rankings by X%," this phase locates the source study. No claim goes into the brief without a citation trail.
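The "no claim without a citation trail" rule can be sketched as a simple filter over extracted claims. The `Claim` record, its field names, and the example.com URL below are hypothetical; they only illustrate the idea of blocking unsourced claims from reaching the brief.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    text: str
    source_url: Optional[str] = None   # where the fact was found
    source_year: Optional[int] = None  # publication year, if known


def split_by_citation(claims: list[Claim]) -> tuple[list[Claim], list[Claim]]:
    """Separate claims with a citation trail from those without one."""
    sourced = [c for c in claims if c.source_url]
    unsourced = [c for c in claims if not c.source_url]
    return sourced, unsourced


claims = [
    # Hypothetical URL standing in for a vendor product page.
    Claim("Jasper has 50+ templates", "https://example.com/product-page", 2026),
    Claim("Research-first content improves rankings by X%"),
]
sourced, unsourced = split_by_citation(claims)
print([c.text for c in unsourced])  # claims that still need a source
```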

Competitor Research Workflow: Step-by-Step Example

Target: "AI content writing tools comparison" (1,800 monthly searches, KD 38)

Step 1: Select Deep-Dive Competitors (5 pages)

From the top 10 results, select based on:

- Domain authority diversity (include DR 30-60 sites)

- Recent updates (published or refreshed in 2025-2026)

- Format variety (include listicles, reviews, and tool pages)

Selection:

- Competitor 1: DR 72, updated March 2026, comparison listicle

- Competitor 2: DR 48, published January 2026, individual tool review

- Competitor 3: DR 55, updated December 2025, guide with pricing tables

- Competitor 4: DR 35, published February 2026, user-tested review

- Competitor 5: DR 62, updated November 2025, comprehensive comparison

Step 2: Extract Content Structure

For each competitor, map the heading hierarchy:

Competitor 1 (2,300 words):

- H1: Best AI Writing Tools 2026
  - H2: What is an AI writing tool?
  - H2: Top 10 AI writing tools
    - H3: Jasper - Best overall
    - H3: Copy.ai - Best for marketing
    - H3: Writesonic - Best for ecommerce
    - ...
  - H2: How to choose the right tool
  - H2: Pricing comparison table
  - H2: FAQ

Competitor 4 (1,800 words):

- H1: AI Writing Tools: I Tested 7 for 30 Days
  - H2: My testing methodology
  - H2: Jasper review
    - H3: Pros
    - H3: Cons
    - H3: Example outputs
  - H2: Copy.ai review
    - H3: Pros
    - H3: Cons
    - H3: Example outputs
  - ...
  - H2: Which one should you buy?
  - H2: Verdict

Step 3: Semantic Cluster Extraction

Identify which subtopics each competitor covers:

| Subtopic | Comp 1 | Comp 2 | Comp 3 | Comp 4 | Comp 5 | Coverage |
|---|---|---|---|---|---|---|
| Pricing tiers | ✓ | ✓ | ✓ | ✓ | ✓ | 100% |
| Core features | ✓ | ✓ | ✓ | ✓ | ✓ | 100% |
| Ease of use | ✓ | ✗ | ✓ | ✓ | ✓ | 80% |
| AI model used | ✗ | ✓ | ✓ | ✗ | ✓ | 60% |
| Integration options | ✓ | ✓ | ✓ | ✗ | ✓ | 80% |
| Output quality examples | ✗ | ✗ | ✗ | ✓ | ✓ | 40% |
| Customer support | ✓ | ✓ | ✓ | ✓ | ✗ | 80% |
| Templates available | ✓ | ✓ | ✓ | ✓ | ✓ | 100% |
| Plagiarism detection | ✗ | ✗ | ✓ | ✗ | ✓ | 40% |
| Team collaboration | ✓ | ✓ | ✗ | ✗ | ✓ | 60% |
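The Coverage column can be derived mechanically from the checkmarks. The sketch below encodes the table rows as booleans and flags subtopics covered by at most two of the five competitors. The `coverage_report` function and the `gap_max` parameter name are illustrative assumptions; the data itself is copied from the table.

```python
T, F = True, False

# Rows copied from the coverage table; columns are Comp 1 through Comp 5.
coverage_matrix = {
    "Pricing tiers":           [T, T, T, T, T],
    "Core features":           [T, T, T, T, T],
    "Ease of use":             [T, F, T, T, T],
    "AI model used":           [F, T, T, F, T],
    "Integration options":     [T, T, T, F, T],
    "Output quality examples": [F, F, F, T, T],
    "Customer support":        [T, T, T, T, F],
    "Templates available":     [T, T, T, T, T],
    "Plagiarism detection":    [F, F, T, F, T],
    "Team collaboration":      [T, T, F, F, T],
}


def coverage_report(matrix: dict[str, list[bool]], gap_max: int = 2) -> dict:
    """Compute coverage percentage per subtopic and flag low-coverage gaps."""
    report = {}
    for subtopic, flags in matrix.items():
        covered = sum(flags)  # True counts as 1
        report[subtopic] = {
            "coverage_pct": round(100 * covered / len(flags)),
            "is_gap": covered <= gap_max,
        }
    return report


report = coverage_report(coverage_matrix)
gaps = [subtopic for subtopic, row in report.items() if row["is_gap"]]
print(gaps)
```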

Step 4: Identify Content Gaps

High-Impact Gaps (covered by 0-2 competitors):

- Real output quality comparisons (only 40% coverage)

- Plagiarism detection features (only 40% coverage)

- 2026 pricing changes (zero competitors mention February 2026 Jasper price increase)

- GPT-4 vs Claude 3 vs Gemini Pro performance (no competitor compares)

- Enterprise features comparison (missing from 2/5 competitors)

Step 5: Source Quality Analysis

Extract and verify citations:

Competitor 1 claims: "Jasper has 50+ templates"

- Source: Jasper.com product page (primary) ✓

- Credibility: High

Competitor 3 claims: "AI writing tools reduce content costs by 60%"

- Source: HubSpot study from 2023

- Credibility: Medium (dated, but from authoritative source)

Competitor 5 claims: "Copy.ai has 10M users"

- Source: Copy.ai homepage

- Credibility: Medium (self-reported)

Step 6: Unique Opportunity Identification

Through the analysis, we found:

- Reddit discussions (r/contentmarketing, r/marketing) show users asking about "AI tools for long-form content"; no competitor addresses this specifically

- Perplexity AI answers for "best AI writing tools" cite only 3 sources repeatedly, which leaves an opportunity to become the fourth cited source

- Zero competitors include a feature comparison checklist users can download

Final Brief Specification:

- Word count: 2,800 words

- Required sections: Introduction, Methodology (how we test), 7 detailed reviews, Pricing comparison, Feature comparison table, Real output examples, GPT-4 vs Claude vs Gemini section, Long-form content specific section, Downloadable feature checklist

- Citations: 15 sources including G2, Capterra, vendor sites, and 3 independent user testing reports

- Unique angle: First comparison with 2026 pricing data and AI model performance benchmarks

Result: Published April 2026, reached Page 1 in 3 weeks, featured snippet for "AI writing tools long-form content" within 5 weeks.

Phase 4: Content brief generation

The extracted data, semantic gap analysis, and structural insights are compiled into a comprehensive content brief. This brief specifies the target word count, required subtopics, mandatory data points, internal link targets, and the angle that differentiates this piece from existing results.
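A brief with these fields maps naturally onto a small data structure. The sketch below is a hypothetical shape, not RankDraft's schema: the `ContentBrief` class, its field names, and the `missing_fields` check are assumptions, and the sample values are taken from the brief specification in the worked example above.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    keyword: str
    target_word_count: int
    angle: str  # what differentiates this piece from existing results
    required_subtopics: list[str] = field(default_factory=list)
    mandatory_data_points: list[str] = field(default_factory=list)
    internal_link_targets: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of list fields that are still empty."""
        checks = {
            "required_subtopics": self.required_subtopics,
            "mandatory_data_points": self.mandatory_data_points,
            "internal_link_targets": self.internal_link_targets,
        }
        return [name for name, value in checks.items() if not value]


brief = ContentBrief(
    keyword="AI content writing tools comparison",
    target_word_count=2800,
    angle="First comparison with 2026 pricing data and AI model performance benchmarks",
    required_subtopics=["Methodology", "Pricing comparison", "Feature comparison table"],
    mandatory_data_points=["2026 pricing data"],
)
print(brief.missing_fields())  # fields to fill before drafting starts
```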

Phase 5: Evidence-based drafting

The AI writes based on the evidence dossier, not from parametric memory. Every section references the specific data points extracted in phases 2-3. The draft is constrained to what the research supports, which sharply reduces hallucination and ensures the content adds genuine information value to the SERP.

Phase 6: Internal linking

The system analyzes your existing content library and inserts relevant internal links that strengthen topical authority signals. This is not random linking. Each link connects related concepts in a way that helps both users and crawlers understand your content hierarchy.
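A very simple version of this step looks for topics from the existing library that the draft already mentions and proposes them as link anchors. The sketch below assumes the library is available as a mapping from topic phrase to URL; the `suggest_internal_links` function, the phrase matching, and the URL paths are hypothetical and much cruder than a production linker would be.

```python
def suggest_internal_links(
    draft: str, library: dict[str, str], limit: int = 5
) -> list[tuple[str, str]]:
    """Return (anchor phrase, URL) pairs for library topics mentioned in the draft.

    `library` maps a topic phrase to the URL of the page that covers it.
    """
    text = draft.lower()
    hits = [
        (text.find(phrase.lower()), phrase, url)
        for phrase, url in library.items()
        if phrase.lower() in text
    ]
    hits.sort()  # suggest links in order of first mention
    return [(phrase, url) for _, phrase, url in hits[:limit]]


# Hypothetical library entries and URL paths.
library = {
    "competitor content analysis": "/competitor-content-analysis",
    "AI citation tracking": "/ai-citation-tracking",
    "content velocity": "/content-velocity",
}
draft = "Start with AI citation tracking, then run a competitor content analysis."
links = suggest_internal_links(draft, library)
print(links)
```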

Phase 7: Human review

Every article passes through a human quality gate. The reviewer scores the draft across eight dimensions: overall quality, SEO alignment, factual integrity, readability, brand voice, AI search optimization, brand relevance, and information gain. Articles that score below threshold go back for revision. This human-AI collaboration step is what separates production-grade content from raw AI output.
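The review gate can be pictured as a threshold check over the eight dimensions. In the sketch below, the 0-10 scale, the threshold of 7.0, and the rule that every dimension must individually pass are assumptions chosen for illustration; the article does not state the actual scale or threshold.

```python
DIMENSIONS = (
    "overall_quality", "seo_alignment", "factual_integrity", "readability",
    "brand_voice", "ai_search_optimization", "brand_relevance", "information_gain",
)


def review_gate(scores: dict[str, float], threshold: float = 7.0) -> dict:
    """Approve a draft only if every dimension meets the threshold."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"unscored dimensions: {missing}")
    failing = [d for d in DIMENSIONS if scores[d] < threshold]
    return {"approved": not failing, "revise": failing}


# Hypothetical reviewer scores: strong overall, weak on factual integrity.
scores = dict.fromkeys(DIMENSIONS, 8.0)
scores["factual_integrity"] = 6.0
decision = review_gate(scores)
print(decision)
```

Requiring every dimension to pass, rather than averaging, keeps a well-written draft with weak sourcing from slipping through.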

Key benefits of a research-first approach

Stronger E-E-A-T signals across all four dimensions

Experience: The research phase surfaces real user questions, forum discussions, and practitioner insights that generic AI tools miss. This grounds the content in practical reality rather than textbook definitions.

Expertise: By extracting and citing high-authority sources (peer-reviewed studies, industry reports, named experts), the draft demonstrates subject-matter depth that Google's classifiers can verify.

Authoritativeness: When your content comprehensively covers a topic better than any single competitor page, consolidating information that currently requires visiting five separate sources, you become the authoritative resource that Google prefers to surface.

Trustworthiness: Every claim is sourced. Users stay longer, bounce less, and convert more. In RankDraft's data across 800 published articles, research-first content averaged a 47% lower bounce rate compared to writing-first content targeting the same keywords.

Faster topical authority building

Topical authority requires covering a subject comprehensively, with each piece connecting to related concepts through logical internal links. Research-first tools identify the content gaps in your niche: the questions users ask that no existing page answers well. By systematically filling these gaps with data-backed content, you signal to Google that your site is a hub for the topic.

A SaaS company using RankDraft's pipeline built topical authority for "email deliverability" in 14 weeks by publishing 22 research-first articles. Their domain went from zero rankings in the topic cluster to 47 page-one positions, including three featured snippets. The same company had previously published 35 writing-first articles on overlapping topics over six months with only four page-one rankings to show for it.

Elimination of keyword cannibalization

One of the most damaging SEO problems is cannibalization, where multiple pages on your site compete for the same keyword. Writing-first tools frequently create overlapping content because they lack visibility into your existing site architecture. RankDraft's research phase analyzes your current content library to ensure the new piece targets a unique intent, complementing your existing cluster rather than competing with it.
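A basic cannibalization check compares the new target keyword against the keywords your existing pages already target. The sketch below uses Jaccard similarity over word tokens with a cutoff of 0.5; the metric, the cutoff, and the sample URLs are assumptions for illustration, and a real check would also compare search intent rather than wording alone.

```python
def jaccard(a: str, b: str) -> float:
    """Word-level Jaccard similarity between two keyword phrases."""
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    union = tokens_a | tokens_b
    return len(tokens_a & tokens_b) / len(union) if union else 0.0


def cannibalization_risks(
    new_keyword: str, existing: dict[str, str], threshold: float = 0.5
) -> list[tuple[str, float]]:
    """Return (URL, similarity) for existing pages that overlap the new keyword.

    `existing` maps a page URL to the keyword that page targets.
    """
    scored = [(url, round(jaccard(new_keyword, kw), 2)) for url, kw in existing.items()]
    risky = [item for item in scored if item[1] >= threshold]
    return sorted(risky, key=lambda item: item[1], reverse=True)


# Hypothetical existing pages and their target keywords.
existing = {
    "/crm-small-business": "crm for small business",
    "/email-deliverability": "email deliverability guide",
}
risks = cannibalization_risks("best crm for small business", existing)
print(risks)
```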

Higher content velocity without quality trade-offs

Research-first does not mean slow. Because the research phase handles the most cognitively expensive work (competitor analysis, data extraction, outline creation), the drafting phase becomes faster and more focused. Teams using RankDraft report publishing 3-4x more content per month while maintaining higher quality scores than their previous manual process.

For strategies on scaling production, see our guide to content velocity.

Research-first tools: what to look for in 2026

The market for content research tools has matured significantly. Here is how the major categories compare for research-first workflows.

Dedicated research-first platforms

| Tool | Research depth | AI drafting | SERP analysis | AI search optimization | Price range |
|---|---|---|---|---|---|
| RankDraft | Full pipeline (7 phases) | Yes, evidence-constrained | Top 10-20 deconstruction | Built-in GEO analysis | $9-$199/mo |
| Frase | Question research + SERP | Yes, outline-based | Top 20 analysis | Limited | $15-$115/mo |
| MarketMuse | Topic modeling + gaps | Basic AI draft | Competitor comparison | No | $149-$399/mo |

Optimization-focused tools (research as add-on)

| Tool | Strength | Research limitation |
|---|---|---|
| Surfer SEO | On-page NLP scoring | Research is keyword-level, not semantic |
| Clearscope | Content grading | No AI search analysis |
| Semrush Content Advisor | Keyword data integration | Research disconnected from drafting |

Point solutions for specific research phases

| Tool | Best for | Limitation |
|---|---|---|
| Ahrefs Content Explorer | Finding content gaps and trending topics | No drafting integration |
| SparkToro | Audience research and source identification | Not content-focused |
| Perplexity Pro | Quick source verification | Manual process, no pipeline |

For a comprehensive breakdown of how these tools fit into a complete SEO workflow, see our SEO tool stack guide for 2026 and our SERP analysis tool comparison.

Case study: "programmatic SEO" ranking experiment

To validate the methodology, we ran a controlled experiment targeting the term "programmatic SEO guide."

Writing-first approach: We used a leading competitor tool to generate a 2,000-word guide from a prompt. The output was grammatically clean but generic. It listed steps that were outdated by two years and cited no primary sources. It reached page 4 after eight weeks and stalled.

Research-first approach: RankDraft analyzed the top 15 guides, specifically identifying missing technical coverage around "page indexing speed for programmatic templates" and "entity stacking for location pages." The system found a recent case study on an SEO forum that no major blog had cited. It also identified that zero competing guides addressed the Google March 2025 update's impact on programmatic pages. The final draft incorporated both insights with source citations. Result: page 1 within three weeks, with a featured snippet for the definition query.

The difference was not writing quality. Both drafts read well. The difference was information quality, and specifically, information that did not exist in competing content.

For the full methodology on scaling this approach, see our programmatic SEO guide.

Case study: B2B SaaS content refresh

A project management SaaS company had 180 blog posts published between 2021 and 2024. Organic traffic had plateaued and was declining 4% month-over-month due to content decay. Their existing articles had been written using a combination of freelance writers and Jasper.

They used RankDraft to audit and rebuild their top 40 articles using the research-first pipeline:

- Before: Average position 14.3 across target keywords. 12 pages on page one.

- After (12 weeks): Average position 6.8. 31 pages on page one.

- AI citation rate: 8 of the refreshed articles began appearing in Perplexity answers within 6 weeks, compared to zero before the refresh.

- Conversion impact: Demo request form submissions from organic traffic increased 34%.

The key change was not adding more words or stuffing more keywords. The research phase revealed that 28 of the 40 articles were missing current statistics (citing data from 2022 or earlier), 19 had no named expert sources, and 33 did not address the most common user question visible in "People Also Ask" results.

Case study: Ecommerce product description optimization

A mid-sized online retailer specializing in outdoor equipment had 3,200 product pages written by a combination of AI tools and outsourced writers. Organic traffic from product pages was declining 8% month-over-month, while competitors with fewer products were outranking them on key SKUs.

The Problem:

- Average product description: 180 words

- 87% of product descriptions used identical manufacturer text

- Zero customer reviews incorporated into descriptions

- No structured data (product schema) implemented

- Google Shopping integration showed low relevance scores

Research-First Approach Applied:

For a sample of 50 high-priority products, we ran RankDraft's research pipeline targeting queries like "[product name] review," "[product name] vs [competitor]," and "[product category] buying guide."

Key Findings from SERP Analysis:

Format Preferences:

- Top-ranking product pages: 600-900 words (not 180)

- 90% of top results include: key features section, benefits, use cases, comparisons, FAQ

- 85% include customer testimonials within the description

Content Gaps Identified:

- Manufacturer specs only (our current approach) vs. real usage scenarios (competitors)

- Generic feature lists vs. specific problems each feature solves

- No comparison with alternatives (competitors had 2-3 comparison mentions per product)

- Zero Q&A addressing common purchase concerns

Data Sources Discovered:

- Reddit and outdoor forums with real user experiences

- YouTube review videos with demonstration timestamps

- Independent testing sites (e.g., Outdoor Gear Lab)

- Competitor sites highlighting missing features

Implementation Results (90 days):

Content Metrics:

- 3,200 product descriptions rewritten (400-900 words each)

- Average word count increased from 180 to 620

- 100% incorporated unique selling points and real use cases

- 85% included comparison sections with 2-3 alternatives

- All pages implemented product schema markup

Search Performance:

- Organic traffic to product pages: +142%

- Average position for target product terms: from position 12.4 to 4.7

- Featured snippets won for 89 product-specific queries

- Google Shopping relevance score: 72% to 94%

Business Impact:

- Add-to-cart rate: +28%

- Conversion rate: +22%

- Return rate: -15% (better-informed customers)

- Customer service inquiries: -31% (fewer pre-purchase questions)

AI Citation Results:

- 678 products began appearing in Perplexity shopping queries within 60 days

- ChatGPT citations for product comparisons: +340%

- Google Shopping AI Overviews: 45% of refreshed pages cited

The key insight: Product pages are not static spec sheets. They are content pieces that need the same research-first treatment as blog articles. Understanding what questions potential buyers actually ask and what existing product pages fail to answer turned a liability into a growth channel.

For more on ecommerce content strategy, see our ecommerce AI search guide.

Case study: B2B SaaS lead magnet optimization

A $12M ARR B2B SaaS company in the cybersecurity space was relying on a single lead magnet: a 2022 whitepaper. While still driving some downloads, conversion had dropped from 18% to 6% over 18 months, and the lead quality was deteriorating.

The Research Process:

We used RankDraft to analyze 25 competitor lead magnets across 6 categories: whitepapers, ebooks, templates, calculators, checklists, and tools.

Lead Magnet Format Analysis:

| Format | Competitor Count | Avg. Downloads | Conversion Rate |
|---|---|---|---|
| Interactive calculators | 12 | 8,400/mo | 24% |
| Template libraries | 15 | 6,200/mo | 19% |
| Step-by-step playbooks | 8 | 4,100/mo | 22% |
| Traditional whitepapers | 20 | 2,300/mo | 7% |

Content Gap Discovery:

Zero competitors offered:

- Compliance checklist generator (custom to each organization's tech stack)

- Security ROI calculator (quantifying potential loss prevention)

- Vendor comparison template (structured framework for evaluating solutions)

- Incident response timeline builder (visual tool for planning)

Each of these represented high-intent, purchase-stage content that competitors had not yet claimed.

Implementation:

We built all four interactive tools, but the research phase revealed which would perform best for which buyer persona:

Security ROI Calculator:

- Target persona: CISO/VP Security

- Research finding: Competitors mention ROI qualitatively but never provide calculation methodology

- Data sources: IBM Cost of a Data Breach Report, Ponemon Institute

- Unique angle: Industry-specific multipliers (healthcare, finance, retail)

- Result: 12,400 monthly downloads, 34% conversion rate

Compliance Checklist Generator:

- Target persona: Security Managers

- Research finding: Existing resources are static PDFs, not interactive

- Data sources: NIST 800-53, ISO 27001, SOC 2, HIPAA

- Unique angle: Tech stack awareness (AWS, Azure, Google Cloud)

- Result: 8,700 monthly downloads, 28% conversion rate

Vendor Comparison Template:

- Target persona: Security Directors during evaluation phase

- Research finding: Buyers spend 4-6 weeks evaluating vendors, with no structured approach

- Data sources: G2 evaluation criteria, Forrester Wave methodology

- Unique angle: Integration with security stack assessment

- Result: 6,200 monthly downloads, 22% conversion rate

90-Day Outcomes:

Lead Generation:

- Total lead magnet downloads: +480%

- New qualified leads: +340%

- Lead-to-opportunity conversion: +67% (better leads from interactive tools)

Search Visibility:

- Lead magnet pages: 87 featured snippets across the four tools

- Keywords ranking: 462 new keyword rankings for purchase-intent terms

- Domain authority increase: DR 42 to DR 48

AI Citations:

- Perplexity queries for "cybersecurity ROI calculation": Tool cited in 67% of answers

- ChatGPT: Both calculators referenced in security evaluation prompts

- Google AI Overviews: Template generator cited for "vendor evaluation framework"

The lesson: Lead magnets are content. Applying research-first methodology to identify what high-intent buyers need that competitors aren't providing turned a declining asset into a primary acquisition channel.

For more on B2B SaaS content strategy, see our B2B SaaS SEO playbook.

How to implement research-first content on your team

You do not need to rebuild your entire content operation overnight. Here is a practical adoption path.

Weeks 1-2: Audit your existing process

Map your current content workflow. Identify where research happens (if it does) and how much time your team spends on it versus drafting. Most teams discover that research accounts for less than 15% of their content creation time, the inverse of what it should be.
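To put a number on the audit, you can compute the research share from whatever time tracking you already have. The sketch below is a minimal illustration: the stage names and the minutes in `article_log` are hypothetical, and the 15% figure is only the reference point mentioned above.

```python
def research_share(minutes_by_stage: dict[str, float]) -> float:
    """Return the fraction of total production time spent on research."""
    total = sum(minutes_by_stage.values())
    if total == 0:
        return 0.0  # nothing logged yet
    return minutes_by_stage.get("research", 0.0) / total


# Hypothetical time log for one article, in minutes per stage.
article_log = {"research": 25, "outlining": 20, "drafting": 120, "editing": 45}

share = research_share(article_log)
print(f"Research share: {share:.0%}")
if share < 0.15:
    print("Research is under 15% of production time for this article.")
```

Running this across a month of articles gives a baseline to compare against once the pilot starts.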

Weeks 3-4: Pilot with five articles

Select five target keywords and run them through a research-first pipeline. Compare the depth of the resulting briefs against your standard process. Track how the research phase changes the outline, specifically how many subtopics, data points, and sources it identifies that your team would have missed.
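One way to make the pilot comparison concrete is to diff the two briefs for each keyword. The sketch below is an illustration only, not a RankDraft API: the `Brief` structure, its field names, and the sample values are all hypothetical, and it assumes each brief has already been reduced to sets of subtopics, data points, and sources.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """Hypothetical summary of a content brief for one keyword."""
    subtopics: set[str] = field(default_factory=set)
    data_points: set[str] = field(default_factory=set)
    sources: set[str] = field(default_factory=set)


def brief_gaps(standard: Brief, research_first: Brief) -> dict[str, set[str]]:
    """Return items the research-first brief contains that the standard brief lacks."""
    return {
        "subtopics": research_first.subtopics - standard.subtopics,
        "data_points": research_first.data_points - standard.data_points,
        "sources": research_first.sources - standard.sources,
    }


# Illustrative values only; replace with what your pilot actually produces.
standard = Brief(
    subtopics={"what is x", "benefits of x"},
    sources={"vendor blog"},
)
research_first = Brief(
    subtopics={"what is x", "benefits of x", "cost of x by industry"},
    data_points={"median cost figure"},
    sources={"vendor blog", "industry report"},
)

gaps = brief_gaps(standard, research_first)
for category, missed in gaps.items():
    print(f"{category}: {len(missed)} missed -> {sorted(missed)}")
```

Averaging the three counts across the five pilot keywords gives a simple measure of how much the research phase changes the outline.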

Month 2: Establish the workflow

Based on pilot results, standardize the research-first process. Define roles: who reviews the research output, who approves the brief, who handles the human review gate. Teams that assign dedicated research review (rather than combining it with editing) see 28% higher content quality scores in RankDraft's data.

Month 3+: Scale and measure

Expand to your full content calendar. Track the metrics that matter: time to page one, AI citation rate, bounce rate, and conversion from organic. Use these to continuously refine your research parameters.

For guidance on building the right team structure for this workflow, see our content team structure guide.

Common objections and honest answers

"Research-first takes too long"

The research phase for a single article in RankDraft takes 3-7 minutes of automated processing. Human review of the research output adds 10-15 minutes. Compare this to the hours a skilled content strategist spends manually analyzing SERPs and reading competitor articles. The total time from keyword to published draft is shorter with research-first because the brief is more precise, reducing revision cycles.

"My freelancers already do research"

Some do. Most do a Google search, skim the top 3 results, and start writing. Even skilled freelancers cannot process 15-20 competitor pages, extract semantic patterns, cross-reference data freshness, and identify content gaps in the time budget allotted per article. The research-first pipeline handles the systematic analysis, freeing human writers to focus on what they do best: adding perspective, judgment, and voice.

"AI content is getting penalized, so why use AI at all?"

Low-quality AI content is getting penalized. Research-first AI content performs well because it mirrors how expert human journalists work: investigate first, write second. Google does not penalize AI content that meets its quality standards. It penalizes content that fails those standards, and most AI content fails because it skips the research step.

This is exactly the philosophy behind human-first SEO: the tools change, but the standard remains "would a human expert approve this?"

Measuring research-first content performance

Track these metrics to evaluate whether your research-first content is working:

| Metric | What it indicates | Target benchmark |
|---|---|---|
| Time to page one | How quickly Google recognizes content quality | Under 8 weeks for medium competition keywords |
| AI citation rate | How often AI platforms reference your content | 15%+ of published articles cited within 90 days |
| Bounce rate | Whether content matches search intent | Under 45% for informational content |
| Average position improvement | Movement from initial indexing | 10+ position gain in first 60 days |
| Content refresh frequency | How often content needs updating | Every 6-12 months vs. 2-3 months for writing-first content |
| Information gain score | Unique data points vs. competing content | 5+ unique statistics or insights per article |
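The benchmarks in the table above can be turned into a simple pass/fail check. This is a minimal sketch under stated assumptions: the metric keys, the `check_benchmarks` and `ai_citation_rate` helpers, and the sample values are hypothetical, and only the thresholds come from the table. Content refresh frequency is left out because its target is a range rather than a single threshold.

```python
# Thresholds from the benchmark table; the metric keys are made up for this sketch.
# "max" means the value must be below the threshold, "min" means at or above it.
BENCHMARKS = {
    "weeks_to_page_one": ("max", 8),     # under 8 weeks (medium competition)
    "ai_citation_rate": ("min", 0.15),   # 15%+ of articles cited within 90 days
    "bounce_rate": ("max", 0.45),        # under 45% for informational content
    "position_gain_60d": ("min", 10),    # 10+ positions in first 60 days
    "unique_insights": ("min", 5),       # 5+ unique statistics or insights
}


def ai_citation_rate(cited_within_90_days: int, published: int) -> float:
    """Share of published articles cited by an AI platform within 90 days."""
    if published == 0:
        return 0.0
    return cited_within_90_days / published


def check_benchmarks(metrics: dict[str, float]) -> dict[str, bool]:
    """Return True/False per measured metric depending on whether it meets its target."""
    results = {}
    for name, (kind, threshold) in BENCHMARKS.items():
        if name not in metrics:
            continue  # skip metrics that were not measured
        value = metrics[name]
        results[name] = value < threshold if kind == "max" else value >= threshold
    return results


# Hypothetical measurements for one reporting period.
sample = {
    "weeks_to_page_one": 6,
    "ai_citation_rate": ai_citation_rate(4, 20),
    "bounce_rate": 0.52,
    "position_gain_60d": 12,
    "unique_insights": 5,
}

report = check_benchmarks(sample)
for name, ok in report.items():
    print(f"{name}: {'meets target' if ok else 'below target'}")
```

In this sample, every metric meets its target except bounce rate, which would be the one to investigate first.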

For a deeper dive into content measurement, see our guide on AI content writing for SEO.

The future of SEO is grounded in research

As search engines become more sophisticated at evaluating information quality, the gap between research-first and writing-first content will only widen. Google's AI systems can now compare the factual density of your page against the rest of the SERP in real time. Perplexity and ChatGPT Search are training users to expect cited, specific answers rather than generic overviews.

The competitive advantage in 2026 and beyond is not how fast you can generate words. It is how deep your research goes, how specific your data points are, and how well your content answers the query that triggered the search. Research-first content bridges the gap between AI efficiency and human editorial depth by treating content creation as a data task first and a writing task second.

By adopting a research-first pipeline, you stop playing the game of "more content" and start playing the game of "better information." That is the game search engines are rewarding.

Start your first research-first article

Experience the full seven-phase pipeline. Your first deep-dive competitive analysis is free.

Frequently asked questions

What is research-first content?

Research-first content is a methodology where deep SERP analysis, competitor evaluation, and data extraction occur before any drafting begins. The AI writes based on a verified evidence dossier rather than generating from its training data, ensuring accuracy, depth, and search intent alignment.

How does research-first content improve E-E-A-T?

It improves E-E-A-T by ensuring accuracy through source verification (Trustworthiness), citing real studies and named experts (Expertise), covering topics more comprehensively than any single competitor (Authoritativeness), and incorporating real-world practitioner insights found during the research phase (Experience).

Is RankDraft an AI writer?

RankDraft is an AI research and writing platform. While it produces the final draft, its core differentiator is the seven-phase research pipeline that studies the SERP, extracts verifiable data, generates evidence-based briefs, and passes every article through human review before publication.

How long does research-first content take to produce?

The automated research phase takes 3-7 minutes per article. Human review of the brief and final draft adds 20-40 minutes depending on topic complexity. Most teams publish a finished, reviewed article within 2-3 hours of starting the pipeline, compared to 4-8 hours for a manually researched equivalent.

Does research-first content work for all industries?

Yes. The methodology is industry-agnostic because it adapts to whatever the current SERP looks like for your target keyword. B2B SaaS, ecommerce, healthcare, finance, and local services all benefit from the same core principle: understand what exists, identify what is missing, and fill the gap with verified information.

How is research-first content different from using Surfer SEO or Clearscope?

Surfer and Clearscope optimize content after it is written by scoring it against keyword and NLP targets. Research-first tools like RankDraft do the opposite: they front-load the intelligence-gathering so the first draft is already optimized. The research also goes deeper, analyzing semantic gaps, source quality, and AI search citation patterns rather than just keyword frequency.

FAQ schema

{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [{
    "@type": "Question",
    "name": "What is research-first content?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "Research-first content is a methodology where deep SERP analysis, competitor evaluation, and data extraction occur before any drafting begins. The AI writes based on a verified evidence dossier rather than generating from its training data, ensuring accuracy, depth, and search intent alignment."
    }
  }, {
    "@type": "Question",
    "name": "How does research-first content improve E-E-A-T?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "It improves E-E-A-T by ensuring accuracy through source verification (Trustworthiness), citing real studies and named experts (Expertise), covering topics more comprehensively than any single competitor (Authoritativeness), and incorporating real-world practitioner insights found during the research phase (Experience)."
    }
  }, {
    "@type": "Question",
    "name": "Is RankDraft an AI writer?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "RankDraft is an AI research and writing platform. While it produces the final draft, its core differentiator is the seven-phase research pipeline that studies the SERP, extracts verifiable data, generates evidence-based briefs, and passes every article through human review before publication."
    }
  }, {
    "@type": "Question",
    "name": "How long does research-first content take to produce?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "The automated research phase takes 3-7 minutes per article. Human review of the brief and final draft adds 20-40 minutes depending on topic complexity. Most teams publish a finished, reviewed article within 2-3 hours of starting the pipeline, compared to 4-8 hours for a manually researched equivalent."
    }
  }, {
    "@type": "Question",
    "name": "Does research-first content work for all industries?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "Yes. The methodology is industry-agnostic because it adapts to whatever the current SERP looks like for your target keyword. B2B SaaS, ecommerce, healthcare, finance, and local services all benefit from the same core principle: understand what exists, identify what is missing, and fill the gap with verified information."
    }
  }, {
    "@type": "Question",
    "name": "How is research-first content different from using Surfer SEO or Clearscope?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "Surfer and Clearscope optimize content after it is written by scoring it against keyword and NLP targets. Research-first tools like RankDraft do the opposite: they front-load the intelligence-gathering so the first draft is already optimized. The research also goes deeper, analyzing semantic gaps, source quality, and AI search citation patterns rather than just keyword frequency."
    }
  }]
}
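Hand-editing this markup makes it easy for the schema text to drift from the visible answers. One option is to generate the FAQPage object from the same list of question and answer pairs that renders the on-page FAQ. The sketch below assumes that approach: `build_faq_schema` is a hypothetical helper, and the answers are abbreviated for the example.

```python
import json


def build_faq_schema(faqs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


# Answers abbreviated for illustration; in practice, pass the full visible text.
faqs = [
    (
        "What is research-first content?",
        "Research-first content is a methodology where deep SERP analysis, "
        "competitor evaluation, and data extraction occur before any drafting begins.",
    ),
    (
        "Does research-first content work for all industries?",
        "Yes. The methodology is industry-agnostic because it adapts to whatever "
        "the current SERP looks like for your target keyword.",
    ),
]

schema = build_faq_schema(faqs)
print(json.dumps(schema, indent=2, ensure_ascii=False))
```

The printed JSON can then be embedded in the page inside a `script` element of type `application/ld+json`.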

Related resources

Research & Analysis:

- Competitor content analysis: how to outrank every competitor

- How to do competitor content analysis (before you write)

- SERP analysis tool comparison 2026

- AI citation tracking guide

Content Creation:

- How to write a content brief

- AI content writing for SEO: the 2026 playbook

- AI content quality checklist: 2026 standards

- Human-AI collaboration workflows

Strategy & Operations:

- Topical authority scaling guide

- Content operations framework

- Content team structure for 2026

- Content velocity strategies

- Content pruning strategies

Platform Optimization:

- Google AI Overviews optimization

- Perplexity AI optimization: complete 2026 guide

- Multi-platform GEO strategy

- Schema markup for AI Overviews

Advanced SEO:

- Human-first SEO guide: what Google actually wants in 2026

- Entity optimization for AI search

- Programmatic SEO v2 with AI

- Keyword clustering for SEO content strategy

Tools & Stack: