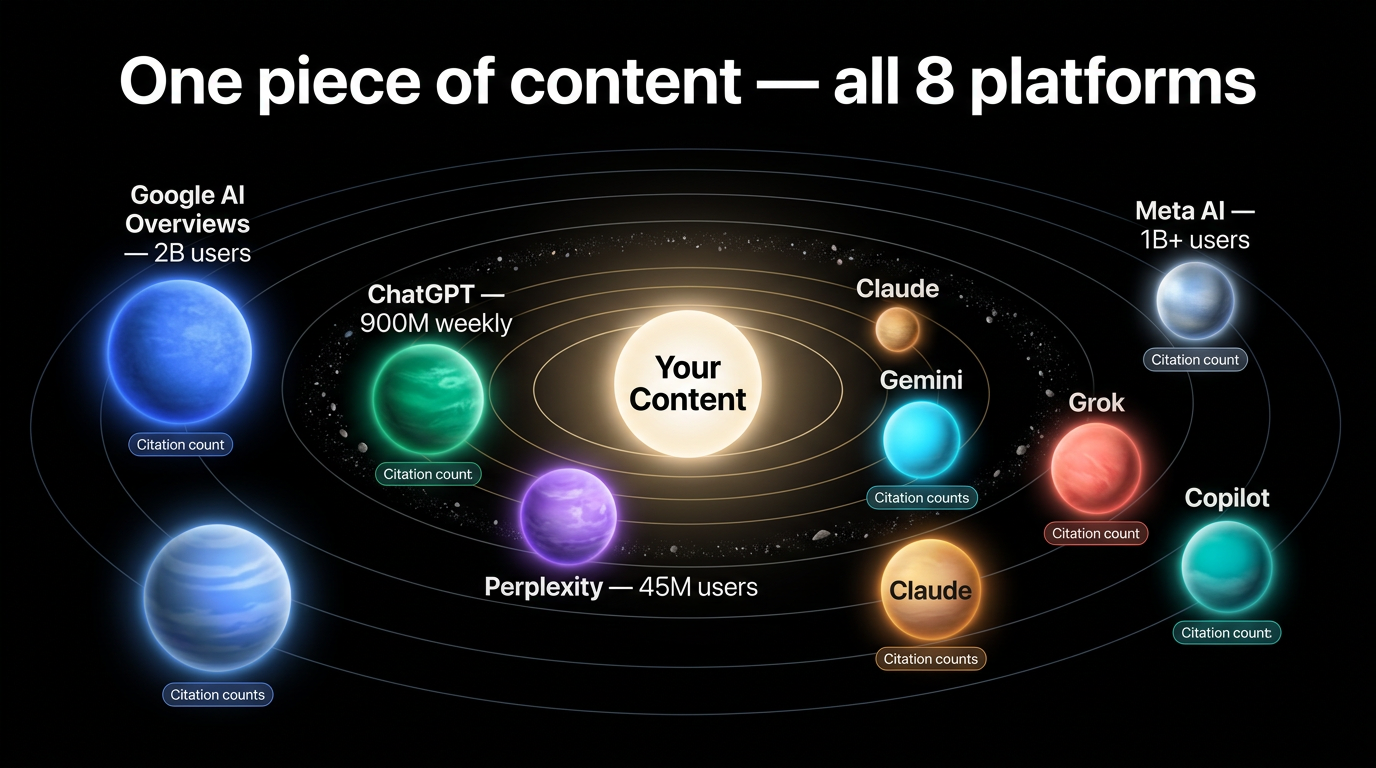

AI search has fragmented across six or more platforms, and each one selects sources differently. Google AI Overviews reach 2 billion monthly users. ChatGPT processes over 1 billion questions per day. Perplexity handles 780 million search queries per month. Meta AI crossed 1 billion monthly active users in May 2025. Optimizing for one platform means leaving the others on the table.

A multi-platform GEO (Generative Engine Optimization) strategy lets you write content once and get cited across all of them. This guide covers how each AI engine selects sources, what content formats they favor, and how to build a unified optimization workflow that works everywhere without creating separate versions for each platform.

The AI search landscape in 2026

The AI search market now has eight platforms with meaningful user bases. Each serves different audiences, different query types, and different use cases. Here is what the landscape looks like as of Q1 2026.

Platform usage and reach

Google AI Overviews and AI Mode

- 2 billion monthly users across 200+ countries

- AI Overviews appear in 13-26% of US searches, up to 39.4% for informational queries

- Google AI Mode hit 100+ million active users in the US and India, processing over 1 billion monthly queries

- Users spend 49 seconds per session in AI Mode vs. 21 seconds in AI Overviews

- 93% of AI Mode sessions end without a click to an external website (SparkToro, Q1 2026)

ChatGPT

- 800 million to 1 billion weekly active users (Sam Altman, January 2026)

- 190.6 million daily active users

- 5.35 billion monthly website visits (SimilarWeb, February 2026)

- 80.49% market share of AI chatbot referral traffic (Datos/Semrush, January 2026)

- Handles 1 billion+ questions per day

Perplexity

- 30-45 million monthly active users

- 780 million search queries per month

- 170 million monthly website visits

- 800% year-over-year growth (Business of Apps, 2026)

- Projected $656 million ARR

Claude

- 18.9 million monthly active web users, 7.38 million mobile MAU (Backlinko, Q1 2026)

- 202.9 million monthly visits

- 70% of Fortune 100 companies use Claude

- 300,000+ business customers

Google Gemini

- 650 million monthly active users (second-largest AI platform by MAU)

- Deep integration with Google Workspace and Android

Meta AI

- 1 billion+ monthly active users (crossed May 2025, doubled in 8 months)

- 40 million daily active users

- Embedded in Facebook, Instagram, WhatsApp, and Messenger across 60+ countries

Grok (xAI)

- ~30 million monthly active users

- Real-time access to X (formerly Twitter), processing ~500 million posts per day

- Strong on trending topics and real-time information

Microsoft Copilot

- 33 million active users across all Copilot surfaces

- 15 million paid seats (Q2 FY2026), 160% year-over-year growth

- Integrated into Edge browser, Windows, and Microsoft 365

Who uses which platform, and for what

Each platform attracts different query patterns. Understanding these patterns shapes where your content needs to perform.

Google AI Overviews and AI Mode users run traditional searches and expect quick answers blended into familiar search results. They click through to sources more than users on any other AI platform, and they skew toward transactional and navigational intent.

Perplexity users tend to run research queries: product comparisons, pricing lookups, and factual verification. They expect inline citations and click through to verify claims. Perplexity's citation CTRs run 15-25%, higher than Google featured snippets (Semrush, 2026).

ChatGPT and Claude users engage in multi-turn conversations. ChatGPT users lean toward broad research and creative tasks. Claude users skew toward technical analysis, academic research, and complex topics requiring nuance.

Meta AI users are discovery-oriented. They encounter AI answers inside social feeds rather than actively searching. Grok users want real-time commentary and trending topic context.

For a detailed comparison of how each engine selects sources, see our AI search engine comparison for 2026.

How each platform selects and cites sources

Otterly.AI analyzed over 1 million AI citations across ChatGPT, Perplexity, and Google AI Overviews in January-February 2026. The findings show that no single content type or source dominates across all platforms.

Citation patterns by platform

Google AI Overviews favor brand visibility and established domains. Reddit is the most-cited source at 2.2% of citations. Google weights E-E-A-T signals heavily, and pages with schema markup have a 2.5x higher chance of appearing in AI-generated answers (Stackmatix, 2025). Google cites 3-5 sources per overview.

Perplexity leans heavily on social and community sources: 31% of citations come from social platforms, with Reddit alone accounting for 24% (Otterly.AI, 2026). Perplexity provides inline linked citations and favors structured, extractable content. It cites 3-7 sources per response and updates in real time.

ChatGPT cites Wikipedia most frequently at 7.8% of total citations (Otterly.AI, 2026). It favors Reddit, news sites, and encyclopedic content. ChatGPT mentions brands frequently but provides weaker link citations compared to Perplexity. It cites 2-5 sources per response.

Claude cites fewer sources overall but weights primary research, academic papers, and expert analysis more heavily. It cites 3-7 sources per response and tends toward balanced, multi-perspective answers.

The Google ranking fallacy

One finding from Otterly.AI's study changes how teams should think about GEO: the share of AI citations going to pages that rank in Google's top 10 dropped from 76% to 38%. Ranking on page one of Google no longer guarantees that AI engines will cite your content. AI platforms make independent source selection decisions, so a dedicated multi-platform approach is necessary.

What content formats get cited most

Bradlee Bartlett's analysis of AI citation patterns found clear format preferences:

- Listicles account for 21-60% of all AI citations depending on platform and query type

- 80% of pages cited by ChatGPT include lists to structure information

- Pages with sections of 120-180 words between headings receive 70% more ChatGPT citations than pages with sub-50-word sections

- Pages with clear H2/H3 hierarchy and bullet point structures are 40% more likely to be cited

- Content with 3+ schema types has 13% higher citation likelihood

- FAQPage schema improves AI citation rates by 30% on average (Stackmatix, 2025)

- Comparative and evaluative content (head-to-head comparisons, cost breakdowns, performance rankings) sits at the top of the citation hierarchy for decision-support queries
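The section-length finding in the list above can be checked mechanically before publishing. The following is a minimal sketch, assuming the draft is written in Markdown with `##` and `###` headings (an assumption about your workflow); the function names and the sample draft are illustrative only.

```python
import re

# Matches Markdown H2/H3 headings such as "## Pricing" or "### Setup".
HEADING = re.compile(r"^#{2,3}\s+(.*)$")

def section_word_counts(markdown: str) -> dict[str, int]:
    """Return the number of body words under each H2/H3 heading."""
    counts: dict[str, int] = {}
    current = None
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            current = match.group(1).strip()
            counts[current] = 0
        elif current is not None:
            counts[current] += len(line.split())
    return counts

def out_of_range(counts: dict[str, int], low: int = 120, high: int = 180) -> list[str]:
    """List headings whose sections fall outside the low-high word range."""
    return [title for title, n in counts.items() if not low <= n <= high]

# Hypothetical draft: one 150-word section and one 40-word section.
draft = "## Pricing\n" + "word " * 150 + "\n### Setup\n" + "word " * 40
counts = section_word_counts(draft)
print(counts)
print(out_of_range(counts))
```

Sections that share the same heading text would overwrite each other in this sketch, so treat it as a starting point rather than a finished audit tool.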

Community platforms capture 52.5% of all AI citations vs. 47.5% for brand domains (Wellows, 2026). This means your content competes not just with other brands but with Reddit threads, Quora answers, and forum discussions.

Building unified content that works everywhere

The most efficient approach is one piece of content optimized for all platforms simultaneously. This section covers the specific structure and elements that perform across engines.

The universal content framework

Based on the citation research above, this structure hits the extraction patterns of every major AI engine:

H1: Primary keyword + clear topic framing

Opening section (2-3 paragraphs): Direct answer to the core question. This is what Google AI Overviews and ChatGPT extract first. Lead with the answer, not background.

Comparison or data table: Structured data that Perplexity extracts directly. Include 5-7 criteria across 5-10 items with specific numbers (prices, percentages, dates).

Detailed analysis (5-7 H3 subsections): Comprehensive coverage with 120-180 words per section. This depth satisfies Claude's preference for nuanced analysis, ChatGPT's preference for completeness, and Google's authority signals.

Statistics and data section: 20+ data points with named sources. Perplexity and ChatGPT cite specific numbers frequently. Name the source for every statistic (e.g., "Semrush's 2026 AI Search Report found...").

Expert analysis and research: Primary source citations, named researchers, and specific findings. Claude weights this heavily. Google's E-E-A-T framework rewards it.

Actionable recommendations (10+ items): Bullet-pointed, specific steps. Include numbers where possible ("update pricing tables monthly" not "keep content fresh").

Case studies (3+): Real examples with before/after data. All platforms cite concrete examples over abstract advice.

FAQ section (20-25 Q&A pairs): Extraction-friendly for Perplexity, long-tail keyword coverage for Google, conversational entry points for ChatGPT and Claude.

Platform-specific elements within unified content

Instead of separate content for each platform, add these elements within your single piece:

For Google AI Overviews:

- Author bio with credentials and publication date

- Schema markup: Article, FAQPage, and Organization at minimum (pages with 3+ schema types get 13% more citations)

- Entity optimization with consistent entity references throughout

- Authoritative external source citations (academic journals, major publications)
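As an illustration of the three-type schema recommendation above, here is a minimal sketch that builds a JSON-LD graph containing Article, FAQPage, and Organization nodes. The property names come from schema.org; the helper name `build_schema` and every value passed to it (company, URL, author, date, FAQ text) are placeholders, not data from this guide.

```python
import json

def build_schema(headline, author, date_published, org_name, org_url, faqs):
    """Build a JSON-LD graph with Article, FAQPage, and Organization nodes."""
    graph = [
        {
            "@type": "Article",
            "headline": headline,
            "author": {"@type": "Person", "name": author},
            "datePublished": date_published,
            "publisher": {"@type": "Organization", "name": org_name},
        },
        {
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in faqs
            ],
        },
        {"@type": "Organization", "name": org_name, "url": org_url},
    ]
    return {"@context": "https://schema.org", "@graph": graph}

# Placeholder values for illustration only.
schema = build_schema(
    headline="Best project management tools for remote teams",
    author="Jane Doe",
    date_published="2026-03-01",
    org_name="Example Co",
    org_url="https://www.example.com",
    faqs=[("What is GEO?", "Generative Engine Optimization.")],
)
json_ld = json.dumps(schema, indent=2)
print(f'<script type="application/ld+json">\n{json_ld}\n</script>')
```

The printed `<script>` tag can be pasted into a page template. Validate the result with the Google Rich Results Test before shipping, since required properties vary by rich result type.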

For Perplexity:

- Comparison tables with specific numbers in every cell

- 25+ FAQ entries (Perplexity extracts these directly as answer cards)

- Numbered step-by-step lists (7-12 steps)

- Monthly content freshness signals (Perplexity's real-time index rewards recent updates)

For ChatGPT:

- 3,000+ total word count with narrative flow connecting sections

- Comprehensive coverage that addresses edge cases and follow-up questions

- 5-10 external authoritative source citations

- Lists within prose sections (80% of ChatGPT-cited pages include lists)

For Claude:

- Academic research citations with named researchers and publication dates

- Balanced perspectives that address counterarguments

- Primary source materials over secondary summaries

- Nuanced analysis that acknowledges limitations

The optimization matrix

| Content element | Google AIOs | Perplexity | ChatGPT | Claude |
|---|---|---|---|---|
| Introduction | 2-3 paragraphs with E-E-A-T signals | 1-2 paragraphs, direct answer first | 2-3 paragraphs, comprehensive framing | 2-3 paragraphs, nuanced with context |
| Comparison table | Include for comparison queries | Required, specific numbers in every cell | Include for structure | Include for data analysis |
| Section depth | 5-7 H3s, 150+ words each | 5-7 H3s, direct and extractable | 5-7 H3s, 120-180 words each | 5-7 H3s, nuanced with caveats |
| FAQ section | 15-20 questions | 25+ questions | 15-20 questions | 15-20 questions |
| Data points | 15-20 with named sources | 20+ specific numbers | 15-20 with context | 15-20 research-backed |
| Schema markup | Article + FAQPage + Organization | Not directly used but helps crawlability | Not directly used | Not directly used |
| Minimum word count | 2,500 | 2,000-2,500 | 3,000 | 2,500-3,000 |
| Update frequency | Quarterly | Monthly | Quarterly | Quarterly |

Research workflow for multi-platform GEO

RankDraft's research-first methodology adapts to multi-platform optimization by analyzing all engines before writing.

Step 1: Multi-SERP analysis

Search your target keyword on all four primary platforms (Google, Perplexity, ChatGPT with web search, Claude with web search). For each platform, document:

- Which domains get cited (track the top 10 sources per platform)

- What content format appears (table, list, narrative, FAQ)

- What specific information gets extracted

- Where the gaps are (information missing from current top results)

Concrete example: "best project management tools for remote teams"

Google AI Overviews pulled comparison tables from TechRadar and PCMag, with pricing data from vendor sites. Perplexity cited Reddit threads (r/projectmanagement) for user opinions, G2 for ratings, and Zapier's blog for feature comparisons. ChatGPT synthesized Wikipedia entries, vendor documentation, and Gartner's 2025 PM market guide. Claude referenced Harvard Business Review's remote work studies and MIT Sloan research on distributed team coordination.

The gap: no single source combined structured pricing comparisons (Perplexity's preference), academic remote-work research (Claude's preference), comprehensive feature analysis (ChatGPT's preference), and authoritative sourcing with author credentials (Google's preference).

Step 2: Gap identification

Map gaps across platforms into a single list. Common patterns:

- Perplexity gaps: missing comparison tables with specific numbers, missing FAQ sections, outdated pricing data

- Claude gaps: missing academic research citations, missing balanced counterarguments, missing primary source references

- ChatGPT gaps: insufficient depth on subtopics, missing narrative connecting sections, lacking comprehensive edge-case coverage

- Google gaps: missing E-E-A-T signals (author bio, credentials, dates), missing schema markup, weak internal link structure
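The documentation and gap-mapping steps above can be kept in a small data structure rather than a spreadsheet. This is a minimal sketch under stated assumptions: the `PlatformObservation` class, the `find_gaps` helper, the format labels, and the sample domains are all hypothetical and only loosely modeled on the project management example.

```python
from dataclasses import dataclass, field

@dataclass
class PlatformObservation:
    """What one platform cited for a target query (recorded by hand)."""
    platform: str
    cited_domains: list[str] = field(default_factory=list)
    formats_seen: set[str] = field(default_factory=set)

def find_gaps(observations, page_formats):
    """Map each platform to the cited formats that the page does not yet have."""
    gaps = {}
    for obs in observations:
        missing = sorted(obs.formats_seen - page_formats)
        if missing:
            gaps[obs.platform] = missing
    return gaps

# Hypothetical observations for one query.
observations = [
    PlatformObservation("Perplexity", ["reddit.com", "g2.com"], {"comparison table", "faq"}),
    PlatformObservation("Claude", ["hbr.org"], {"academic citations"}),
    PlatformObservation("ChatGPT", ["wikipedia.org"], {"narrative"}),
]
# Formats the existing page already contains (also hypothetical).
page_formats = {"narrative", "faq"}

gaps = find_gaps(observations, page_formats)
print(gaps)
```

The resulting dictionary is the single gap list that step 3 turns into an outline.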

Step 3: Unified structure design

Build one content outline that fills every gap from step 2. Assign platform-priority tags to each section so writers know which elements are non-negotiable.

Step 4: Write, then layer platform-specific elements

Write the core content first, targeting 3,000+ words of substantive analysis. Then layer in:

- Tables and numbered lists (Perplexity extraction)

- Schema markup and author bio (Google E-E-A-T)

- Academic citations and balanced perspectives (Claude)

- Comprehensive FAQ section (all platforms)

This process adds roughly 20% more effort compared to single-platform optimization, but reaches 4-7x more AI search surfaces.

Measuring multi-platform performance

Tracking AI search visibility across platforms requires dedicated tools. Manual citation tracking alone does not scale.

GEO tracking tools (2026)

Several platforms now track AI search citations across multiple engines:

- Otterly.AI (G2's Top AEO Platform 2026): tracks ChatGPT, Google AI Overviews, Perplexity, Copilot, and Gemini. Plans start at $29/month for 15 tracked prompts, $189/month for 100 prompts, and $489/month for 400 prompts.

- Peec AI: tracks 10+ LLMs including Grok and Google AI Mode. Enterprise-grade with API access. Pricing requires a demo.

- Scrunch AI: starts at $300/month, focused on brand appearance tracking across LLM outputs.

- SE Ranking Visible: multi-platform AI visibility tracking integrated into existing SE Ranking dashboards.

- RankScale: entry-level option at ~$20/month for basic AI citation monitoring.

For a complete breakdown of citation tracking approaches, see our AI citation tracking guide.

Metrics to track per platform

Google AI Overviews:

- AIO impressions via Google Search Console's new AI Overviews filter

- Citation position (first source vs. third source in an overview)

- Organic CTR change when AIOs appear (expect 0.61% CTR vs. 1.62% without AIOs, per Datos 2026 data)

Perplexity:

- Citation count per tracked query (tools like Otterly.AI automate this)

- Referral traffic from perplexity.ai in Google Analytics

- Citation CTR (Perplexity averages 15-25%, higher than Google featured snippets)

ChatGPT:

- Referral traffic from chat.openai.com and chatgpt.com

- Brand mention frequency in ChatGPT responses (Scrunch AI or manual sampling)

- ChatGPT drives 78.16% of all AI chatbot referral traffic (Datos, March 2026)

Claude:

- Referral traffic from claude.ai

- Citation frequency in Claude responses (manual sampling or Peec AI)

- Claude's referral share grew from 1.37% to 2.91% between February and March 2026 (MediaPost)
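If you export raw referrer URLs from your analytics tool, the referral metrics above can be grouped by platform with a small classifier. The sketch below is illustrative: the hostname list covers only the domains named in this section, and `classify_referrer` is a hypothetical helper, not part of any analytics API.

```python
from collections import Counter
from urllib.parse import urlparse

# Referrer hostnames named in this guide; extend the map for other platforms.
AI_REFERRERS = {
    "perplexity.ai": "Perplexity",
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
}

def classify_referrer(url: str) -> str:
    """Return the AI platform for a full referrer URL, or 'Other'."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    for domain, platform in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return platform
    return "Other"

# Hypothetical referrer export.
referrers = [
    "https://www.perplexity.ai/search?q=best+crm",
    "https://chatgpt.com/",
    "https://chat.openai.com/c/abc",
    "https://claude.ai/chat/123",
    "https://www.google.com/",
]
by_platform = Counter(classify_referrer(u) for u in referrers)
print(by_platform)
```

The function expects full URLs including the scheme; a bare string such as `claude.ai` has no parseable hostname and falls into "Other".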

AI search referral traffic share (March 2026)

| Platform | Share of AI referral traffic |
|---|---|
| ChatGPT | 78.16% |
| Google Gemini | 8.65% |
| Perplexity | 7.07% |
| Microsoft Copilot | 3.19% |
| Claude | 2.91% |
| DeepSeek | 0.02% |

Source: Datos/Semrush, March 2026

Attribution and conversion tracking

AI search visitors convert at significantly higher rates than traditional organic search visitors. Semrush's 2026 AI search study found:

- AI search visitors convert at 4.4x the rate of traditional organic (14.2% vs. 2.8%)

- ChatGPT referral traffic converts at 15.9%

- Perplexity referral traffic converts at 10.5%

- Perplexity inline citations convert at 11x the rate of traditional organic search (the highest ROI per citation earned)

- Brands cited prominently in AI answers see a 156% increase in direct branded search queries

These numbers mean that even small citation gains on AI platforms can produce outsized revenue impact. For a deeper dive on measuring content ROI across AI platforms, see our ROI measurement guide.

Common multi-platform mistakes

Creating separate content for each platform

Some teams write "Best CRM (Perplexity version)" and "Best CRM (Google version)" as separate pages. This quadruples production effort, creates duplicate content problems, and splits link equity. Write one unified piece with platform-specific elements layered in. The extra effort is ~20%, not 300%.

Ignoring platform differences entirely

Some teams write for Google only and assume the content will work everywhere. It does not. Perplexity needs structured tables with specific numbers. Claude needs academic citations. ChatGPT needs 3,000+ word depth. Content that ranks #1 on Google can still get zero citations on other AI platforms: per Otterly.AI (2026), the top-10 Google citation rate has dropped to 38%.

Over-indexing on traffic volume

Google sends 190x more referral traffic than ChatGPT despite ChatGPT having 12% of Google's search volume (ALM Corp, 2026). But ChatGPT referral traffic converts at 15.9% vs. Google organic at 2.8%. A team that allocates 100% of optimization effort to Google because "it has the most traffic" misses the platforms where each visitor is worth 4-5x more.

Not tracking cross-platform performance

Only 12% of marketing teams have a documented GEO strategy (Incremys, 2026). Most teams track Google Search Console and nothing else. Without cross-platform tracking, you cannot identify which platform is actually driving revenue or where your content has gaps. Start with one of the tracking tools listed above, even at the $29/month tier.

Using a single refresh cadence

Perplexity's real-time index rewards monthly content updates. Google, ChatGPT, and Claude are fine with quarterly refreshes. Teams that refresh everything on a single quarterly schedule lose Perplexity citations because competitors with fresher content get cited instead. For a full guide on refresh timing, see our content refresh strategies for 2026.
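A split cadence is simple to automate. The sketch below assumes you track a last-updated date per page element; the 30-day and 90-day thresholds are rough stand-ins for "monthly" and "quarterly", and the element names are hypothetical.

```python
from datetime import date, timedelta

# Approximate cadences in days; 30 and 90 are assumptions, not platform rules.
CADENCE_DAYS = {"monthly": 30, "quarterly": 90}

def is_due(last_updated: date, cadence: str, today: date) -> bool:
    """Return True when at least one full cadence has passed since the last update."""
    return today - last_updated >= timedelta(days=CADENCE_DAYS[cadence])

# Hypothetical page elements: (name, cadence, last updated).
elements = [
    ("pricing table", "monthly", date(2026, 1, 5)),
    ("case study section", "quarterly", date(2026, 1, 5)),
]
today = date(2026, 2, 10)
due = [name for name, cadence, updated in elements if is_due(updated, cadence, today)]
print(due)
```

Here only the pricing table is flagged, because 36 days have passed: more than a month but less than a quarter.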

Advanced multi-platform strategies

Content clusters with cross-platform optimization

Build topical authority through content clusters where each piece is optimized for all platforms, and the cluster collectively covers every angle AI engines look for.

Structure:

- Pillar page: comprehensive guide optimized for all platforms (3,500+ words, all platform-specific elements)

- Supporting page 1: comparison/pricing piece (Perplexity-optimized tables, monthly updates)

- Supporting page 2: research deep-dive (Claude-optimized academic citations)

- Supporting page 3: step-by-step tutorial (ChatGPT-optimized depth and narrative flow)

- All pages internally linked to each other

When one piece in the cluster gets cited by an AI engine, the internal links increase the likelihood that the engine discovers and cites other pieces in the cluster.

Real-time data integration for Perplexity freshness

Perplexity's real-time index checks content freshness more aggressively than any other platform. Teams that pull pricing data from APIs and update statistics automatically get a structural advantage. If your CMS supports dynamic data injection, use it for:

- Pricing tables (update from vendor APIs)

- Market statistics (update from data sources)

- "Last updated" timestamps (update on every data refresh)

This keeps Perplexity citing your content without manual monthly updates.
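As a rough sketch of dynamic data injection, the function below renders a Markdown pricing table and stamps it with the refresh date. The prices are passed in as a plain dictionary; how you fetch them (vendor API, spreadsheet, database) is left out because it depends on your stack. The tool names and prices are invented for illustration.

```python
from datetime import date

def render_pricing_table(prices: dict[str, float], updated: date) -> str:
    """Render a Markdown pricing table followed by a 'Last updated' line."""
    rows = ["| Tool | Price per user/month |", "|---|---|"]
    for tool, price in sorted(prices.items()):
        rows.append(f"| {tool} | ${price:.2f} |")
    rows.append("")
    rows.append(f"Last updated: {updated.isoformat()}")
    return "\n".join(rows)

# Hypothetical prices; in practice these would come from your data source.
prices = {"Tool B": 12.5, "Tool A": 10.0}
table = render_pricing_table(prices, date(2026, 3, 1))
print(table)
```

Running this on every data refresh keeps the table and the timestamp in sync, so the freshness signal always reflects a real change in the data.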

Cross-platform citation networks

Otterly.AI's data shows that when one piece in a domain gets cited, other pages on that domain are more likely to get cited in related queries. Build on this by creating interlinked content that covers adjacent topics:

- If your CRM guide gets cited, your CRM pricing page and CRM implementation guide are more likely to appear in follow-up queries

- Internal links between related guides help AI crawlers discover your full content library

- Consistent entity references across pages strengthen your domain's topical signals

Optimizing for emerging platforms

Google AI Mode, Meta AI, and Grok are growing fast but have limited tracking infrastructure today. Practical steps:

- Google AI Mode: optimize the same way you do for AI Overviews (same engine, conversational interface). Note that ads appear in 25.5% of AI Mode results, up 394% year-over-year, so paid placements may complement organic GEO.

- Meta AI: focus on content that performs well in discovery contexts (social-shareable data, visual-friendly statistics). Meta AI currently does not drive significant referral traffic to external websites, but that may change as it adds source citation.

- Grok: ensure your content covers real-time and trending aspects of your topic. Grok pulls from X posts, so having an active X presence with links to your content helps.

Resource allocation for multi-platform GEO

Time investment comparison

Single-platform (Google only), 100 hours:

- Research: 10 hours

- Google-optimized content: 80 hours

- Tracking: 10 hours

Multi-platform unified, 120 hours (20% more):

- Multi-SERP research across all platforms: 15 hours

- Universal content creation: 55 hours

- Platform-specific elements (tables, schema, citations, FAQ expansion): 25 hours

- Cross-platform tracking setup and monitoring: 15 hours

- Monthly Perplexity freshness updates: 10 hours

The 20% additional investment reaches 4-7x more AI search surfaces.

Platform priority weighting

Weight optimization effort by conversion value, not just traffic volume:

Traffic-weighted allocation:

- Google: 50%

- ChatGPT: 20%

- Perplexity: 15%

- Gemini: 8%

- Claude + Copilot + others: 7%

Conversion-weighted allocation (based on 2026 referral conversion data):

- Perplexity: 30% (10.5% conversion rate, 11x organic ROI per citation)

- ChatGPT: 25% (15.9% conversion rate, largest share of AI referral traffic)

- Google: 25% (largest reach, lower per-visit conversion)

- Claude: 12% (growing share, high-quality B2B visitors)

- Others: 8%

Your actual allocation should depend on your audience. B2B companies with technical buyers may want to weight Claude and Perplexity more heavily. B2C brands with broad audiences should weight Google and ChatGPT.
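To turn either allocation into a working plan, multiply the percentages by your hour budget. The sketch below applies both allocations to the 120-hour multi-platform budget from the time investment comparison; pairing those percentages with that budget is an illustration, not something the source data prescribes, and `hours_by_platform` is a hypothetical helper.

```python
def hours_by_platform(total_hours: float, allocation: dict[str, float]) -> dict[str, float]:
    """Split an hour budget according to percentage weights that sum to 100."""
    if abs(sum(allocation.values()) - 100) > 1e-9:
        raise ValueError("allocation percentages must sum to 100")
    return {platform: total_hours * pct / 100 for platform, pct in allocation.items()}

traffic_weighted = {
    "Google": 50, "ChatGPT": 20, "Perplexity": 15,
    "Gemini": 8, "Claude + Copilot + others": 7,
}
conversion_weighted = {
    "Perplexity": 30, "ChatGPT": 25, "Google": 25, "Claude": 12, "Others": 8,
}

traffic_hours = hours_by_platform(120, traffic_weighted)
conversion_hours = hours_by_platform(120, conversion_weighted)
for platform, hours in conversion_hours.items():
    print(f"{platform}: {hours:.1f} hours")
```

Under the conversion-weighted split, Perplexity receives 36 of the 120 hours compared with 18 under the traffic-weighted split, which makes the practical difference between the two approaches concrete.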

Multi-platform GEO checklist

Before publishing, verify every item:

Content quality:

- Direct answer to the core question in the opening 2-3 paragraphs

- 3,000+ words of substantive analysis (not padded with filler)

- 20+ data points with named sources

- 3+ case studies or concrete examples with specific numbers

- Meets content quality standards

Platform-specific elements:

- Google: author bio with credentials, publication date, Article + FAQPage + Organization schema

- Perplexity: comparison table with numbers in every cell, 25+ FAQ entries, numbered step lists

- ChatGPT: narrative flow between sections, comprehensive edge-case coverage, 5+ external citations

- Claude: academic/research citations with named authors, balanced perspectives, limitation acknowledgments

Technical:

- Schema markup implemented and validated (Google Rich Results Test)

- Internal links to 5+ related content pieces

- External links to 5+ authoritative sources

- Page load under 2.5 seconds (Core Web Vitals)

- Mobile-friendly layout tested

Tracking:

- Cross-platform citation monitoring configured (Otterly.AI, Peec AI, or manual sampling)

- Referral traffic segments created in GA4 for each AI platform

- Monthly refresh calendar set for Perplexity freshness

- Quarterly refresh calendar set for all platforms
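Some of the checklist items above are countable and can be verified by a script before a human review. This is a minimal sketch, assuming a Markdown draft in which FAQ entries start with `Q:` and tables use the standard `|---|` separator row; both conventions are assumptions about your drafts, and the check names are illustrative.

```python
import re

# Matches a Markdown table separator row such as "|---|---|" or "| :--- |".
TABLE_SEPARATOR = re.compile(r"^\s*\|\s*:?-{3,}")

def prepublish_checks(markdown: str) -> dict[str, bool]:
    """Run the countable checklist items against a Markdown draft."""
    lines = markdown.splitlines()
    word_count = len(markdown.split())
    faq_count = sum(1 for line in lines if line.lstrip().startswith("Q:"))
    has_table = any(TABLE_SEPARATOR.match(line) for line in lines)
    return {
        "3,000+ words": word_count >= 3000,
        "25+ FAQ entries": faq_count >= 25,
        "comparison table present": has_table,
    }

# Hypothetical draft that satisfies all three checks.
draft = (
    "| Tool | Price |\n|---|---|\n| A | $10 |\n"
    + "Q: What? A: That.\n" * 25
    + "word " * 3000
)
results = prepublish_checks(draft)
failed = [name for name, passed in results.items() if not passed]
print(results)
print(failed)
```

Items such as source quality, balanced perspectives, and narrative flow still need an editor; a script can only confirm the structural minimums.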

Case study: B2B SaaS multi-platform optimization

Company: A $10M ARR B2B SaaS platform selling project management software. Strong Google presence but invisible on AI search platforms.

Starting point (October 2025):

- Google organic: top 5 rankings for 30 target keywords, 12,000 monthly organic visits

- AI search citations: 0 tracked citations across Perplexity, ChatGPT, or Claude

- AI referral traffic: 0 (no tracking in place, minimal actual traffic)

What they changed:

Month 1: Research and restructuring

- Ran multi-SERP analysis for all 30 target keywords across four platforms

- Identified gaps: no comparison tables (Perplexity), no academic research citations (Claude), insufficient FAQ sections (all platforms), no schema markup beyond basic Article

- Restructured 10 priority pages with the universal content framework

Month 2: Platform-specific elements

- Added comparison tables with specific pricing, feature counts, and integration numbers to all 10 pages

- Expanded FAQ sections from 5-8 questions to 25+ per page

- Implemented FAQPage, Organization, and HowTo schema markup

- Added author bios with credentials and publication dates

- Cited 4 academic studies on remote work productivity (MIT Sloan, Harvard Business Review, Stanford WFH research, Gartner PM market guide)

Month 3: Measurement and freshness

- Set up Otterly.AI tracking for 100 prompts across all platforms ($189/month)

- Created GA4 referral segments for perplexity.ai, chat.openai.com, and claude.ai

- Updated all pricing tables and statistics with Q1 2026 data

- Published 3 new supporting cluster pages (pricing comparison, implementation guide, research roundup)

Results after 6 months (April 2026):

- Google organic: maintained top 5 rankings, 13,800 monthly visits (+15%)

- Perplexity citations: 180 tracked citations across target queries, 520 monthly referral visits

- ChatGPT citations: 95 tracked citations, 380 monthly referral visits

- Claude citations: 40 tracked citations, 170 monthly referral visits

- Total monthly traffic: 14,870 (up from 12,000)

- AI referral conversion rate: 12.8% (vs. 3.1% for Google organic)

- Pipeline attributed to AI search: $1.2M in influenced pipeline over 90 days

- SQL attribution from AI referrals: +63%

- Demo-to-close rate from AI-referred traffic: 18% higher than Google organic

Of the 2,870 additional monthly visits, 1,070 came from AI platforms, and those visits converted at roughly 4x the rate of Google organic traffic (12.8% vs. 3.1%). At their average deal size, the AI search pipeline paid for the entire content optimization effort in the first quarter.
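The traffic figures in the results list can be cross-checked with a few lines of arithmetic. This sketch uses only numbers reported in the case study; the variable names are illustrative.

```python
baseline_visits = 12_000          # Google organic, October 2025
google_visits = 13_800            # Google organic, April 2026
ai_visits = {"Perplexity": 520, "ChatGPT": 380, "Claude": 170}

ai_total = sum(ai_visits.values())                 # 1,070 AI referral visits
total_visits = google_visits + ai_total            # 14,870 total monthly visits
total_gain = total_visits - baseline_visits        # 2,870 more than the baseline
google_growth = (google_visits - baseline_visits) / baseline_visits

# Reported conversion rates: 12.8% for AI referrals, 3.1% for Google organic.
conversion_ratio = 12.8 / 3.1

print(f"AI referral visits: {ai_total}")
print(f"Total monthly visits: {total_visits}")
print(f"Total gain over baseline: {total_gain}")
print(f"Google organic growth: {google_growth:.0%}")
print(f"AI vs. organic conversion ratio: {conversion_ratio:.1f}x")
```

The breakdown shows that AI referrals account for 1,070 of the 2,870-visit gain, with the remaining 1,800 coming from Google organic growth.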

Source: adapted from VisibleIQ's 2026 B2B SaaS AI search case study with additional platform-specific data.

What is changing in multi-platform GEO

Three developments will reshape multi-platform GEO over the next 12-18 months:

Google AI Mode is cannibalizing its own search results. 93% of AI Mode sessions produce zero clicks to external websites. Google AI Mode already has 100+ million users and is expanding to 53 languages and 40+ markets. Content teams need to optimize for citation within AI Mode responses, not just for organic click-through. This is a different optimization target than traditional SEO.

AI referral traffic is growing at 527% year-over-year (Semrush, 2026), but from a small base. AI referral traffic is 1.08% of all website traffic today, projected to reach 20-28% by end of 2026. Teams that build multi-platform GEO now are positioning for a traffic source that may represent a quarter of all referrals within 12 months.

Community content is outcompeting brand content for citations. Reddit, Quora, and forum threads capture 52.5% of AI citations vs. 47.5% for brand domains (Wellows, 2026). Brands need content that reads like expert community contributions, not marketing pages, to compete for citations. This means first-person experience, specific data, and honest assessments of tradeoffs.

Next steps

AI search is multi-platform and growing. Optimizing for Google only means missing Perplexity, ChatGPT, Claude, and emerging platforms where visitors convert at 4-5x higher rates.

Start with these three actions:

- Run a multi-SERP analysis for your top 10 keywords across Google, Perplexity, ChatGPT, and Claude. Document which sources get cited and what formats appear.

- Restructure your top 5 pages using the universal content framework. Add comparison tables, expand FAQ sections to 25+, implement schema markup, and include named-source statistics.

- Set up cross-platform tracking. Even a $29/month Otterly.AI plan gives you visibility into 15 tracked prompts across five platforms. Create GA4 referral segments for each AI platform's domain.

Build your research-first content strategy across all AI search engines. Use RankDraft's multi-SERP research tools to analyze citation patterns, identify gaps, and create content that gets cited everywhere.


Frequently asked questions

Q: Should I create separate content for each AI platform? A: No. Create one unified piece with platform-specific elements layered in. Separate content is 4x the production cost, creates duplicate content problems, and splits link equity. Add Perplexity comparison tables, Google schema markup, Claude academic citations, and ChatGPT narrative depth to the same piece. The extra effort is roughly 20%.

Q: Which AI platform should I prioritize? A: Prioritize by conversion value, not just traffic. ChatGPT drives 78% of AI referral traffic but Perplexity citations convert at 11x the rate of traditional organic search (Semrush, 2026). Google has the largest total reach. Claude drives the smallest volume but attracts high-intent B2B visitors. Start with Google + Perplexity + ChatGPT, then expand to Claude and emerging platforms.

Q: How do I track citations across all AI platforms? A: Use a dedicated GEO tracking tool. Otterly.AI ($29-489/month) tracks ChatGPT, Perplexity, Google AI Overviews, Copilot, and Gemini. Peec AI covers 10+ LLMs including Grok and Google AI Mode. For referral traffic, create platform-specific segments in GA4 filtering by referral source (perplexity.ai, chat.openai.com, chatgpt.com, claude.ai). See our AI citation tracking guide for the full setup.

Q: Does optimizing for all platforms mean writing generic content? A: The opposite. Multi-platform GEO requires more specific, more data-rich, and more thoroughly researched content than single-platform optimization. Claude requires academic citations. Perplexity requires specific numbers in every table cell. ChatGPT requires 3,000+ words of depth. Content that satisfies all three is better than content optimized for any single one.

Q: How often should I refresh multi-platform content? A: Match your refresh cadence to the most demanding platform. Perplexity's real-time index rewards monthly updates, so pricing tables, statistics, and FAQ sections should be refreshed monthly. Deeper content analysis, case studies, and narrative sections can be refreshed quarterly. During each refresh, update all platform-specific elements. Our content refresh guide covers prioritization in detail.

Q: What is the ROI of multi-platform GEO vs. Google-only optimization? A: AI search visitors convert at 4.4x the rate of traditional organic visitors (14.2% vs. 2.8%, per Semrush 2026). Brands cited in AI answers see a 156% increase in branded search queries. A B2B SaaS company in VisibleIQ's 2026 case study generated $1.2M in influenced pipeline from AI search in 90 days. The 20% additional effort for multi-platform optimization produces outsized returns because AI referral traffic is higher-intent.

Q: How do emerging platforms like Meta AI and Grok affect my strategy? A: Meta AI has 1 billion+ MAU but does not currently drive significant referral traffic to external websites. Grok has ~30 million MAU with a real-time X data advantage. Neither platform has mature citation tracking tools yet. Optimize for the big four (Google, Perplexity, ChatGPT, Claude) first, and monitor emerging platforms quarterly. Content optimized for the universal framework will naturally perform well on new platforms as they mature.

Q: Can existing content be retrofitted for multi-platform GEO? A: Yes. Start with your highest-traffic pages. Add comparison tables, expand FAQ sections, implement schema markup, and include named-source statistics. The content decay detection process can identify which pages to prioritize for retrofitting based on declining performance signals.

Q: How does topical authority affect multi-platform citations? A: Otterly.AI's data shows that when one page on a domain gets cited, other pages on that domain are more likely to appear in related queries across all platforms. Building content clusters with strong internal linking amplifies this effect. A domain with 20 interlinked articles on CRM software is more likely to get cited across platforms than a domain with a single CRM guide.

Q: What is the biggest mistake teams make with multi-platform GEO? A: Allocating 100% of optimization effort to Google because it has the most traffic. Google sends 190x more referral traffic than ChatGPT, but ChatGPT referral visitors convert at 15.9% vs. 2.8% for Google organic. Teams that ignore AI platforms miss visitors worth 4-5x more per session. The second biggest mistake is not tracking: only 12% of marketing teams have a documented GEO strategy (Incremys, 2026).